Having a website on its own won’t get you traffic. And sometimes, it’s difficult to pinpoint why you’re not getting the traffic you want.

For that reason, you might think about improving your SEO. You could hire an in-house SEO specialist, a marketing agency, or a freelance SEO. Or you could try to do it yourself.

Wondering where to start?

Understanding the types of SEO out there can help you choose the right services to get your website in the spotlight. It can also empower you to fix some issues yourself and hand the rest off to a specialist.

What Are the Types of SEO?

Three main types of SEO are:

- On-page SEO: Anything you do with website content to improve rankings, such as using relevant keywords

- Off-page SEO: Anything you do outside of your website to improve rankings, such as building backlinks

- Technical SEO: Anything you do on the technical side of things, such as improving page speed

Let’s break down these types a bit more.

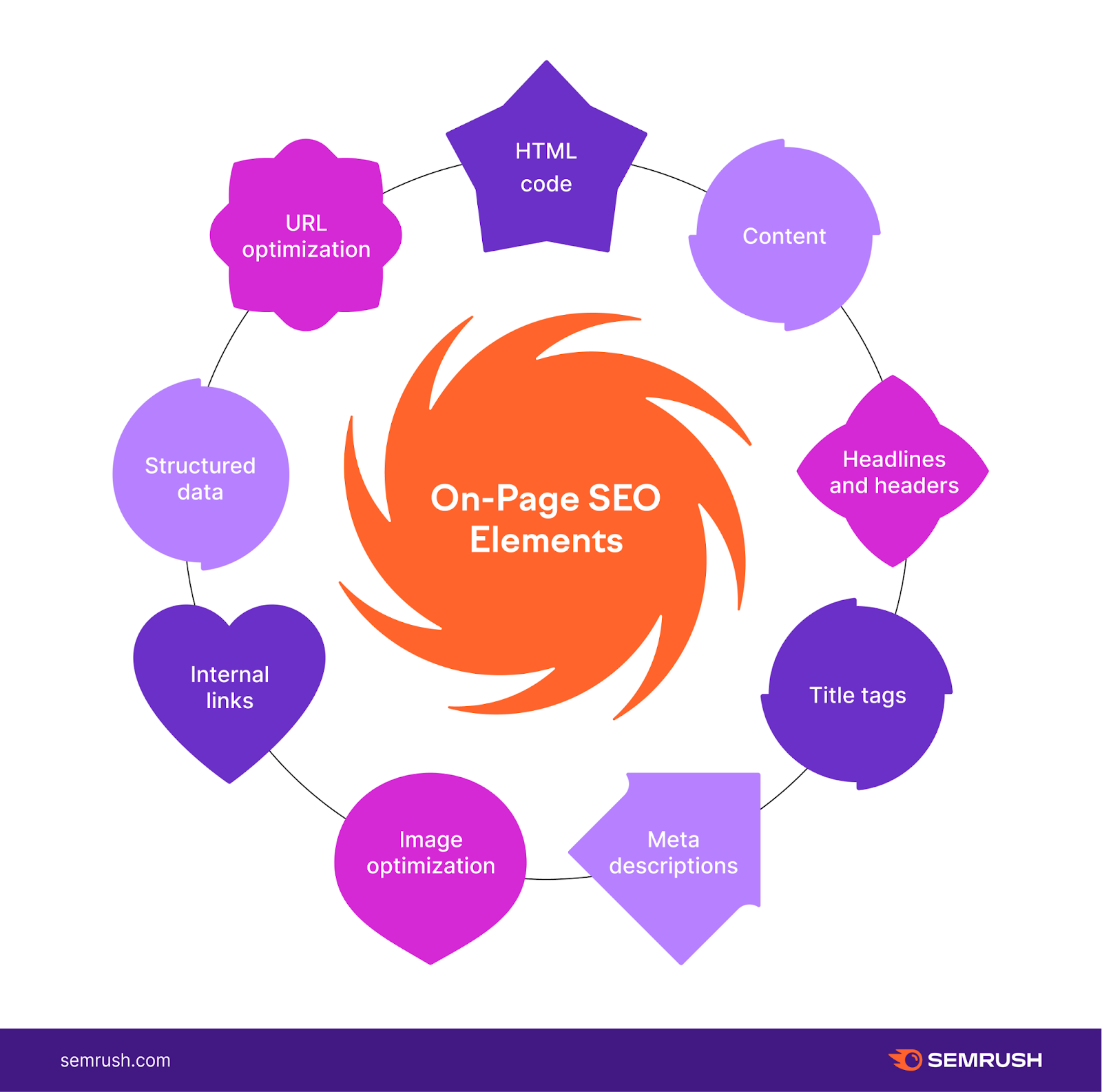

On-Page SEO

On-page SEO deals with the content on your website. In order to rank on search engines and acquire traffic, your website needs to have content that can be easily found and understood. On top of that, Google stresses that it prefers content that is helpful.

These services could look like:

- Assigning a target keyword to each important webpage on a site

- Optimizing page titles to target those specific keywords

- Updating item descriptions of an online store

- Adding fresh content to a blog

- Updating the structure of an old article or service page

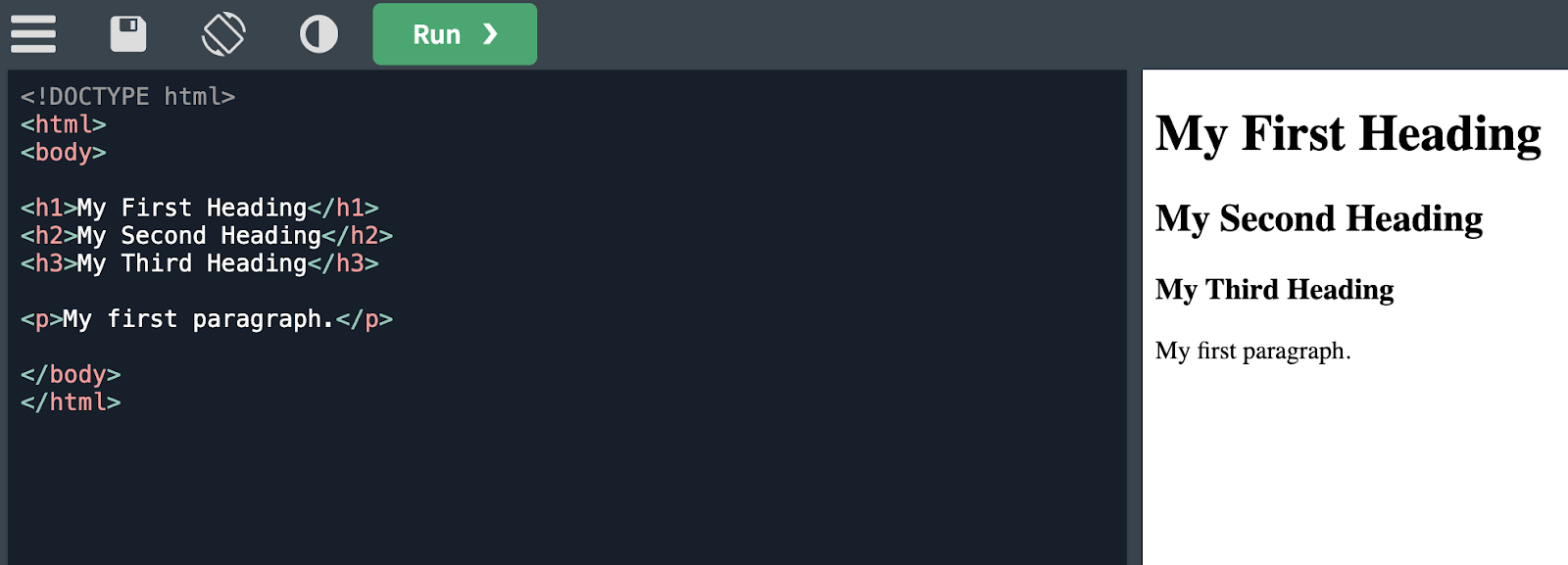

- Adding structure to content through the right HTML tags

- Coming up with a content plan for each stage of a customer journey

If the content of your website isn’t targeting keywords or is generally unhelpful, you might need better on-page SEO.

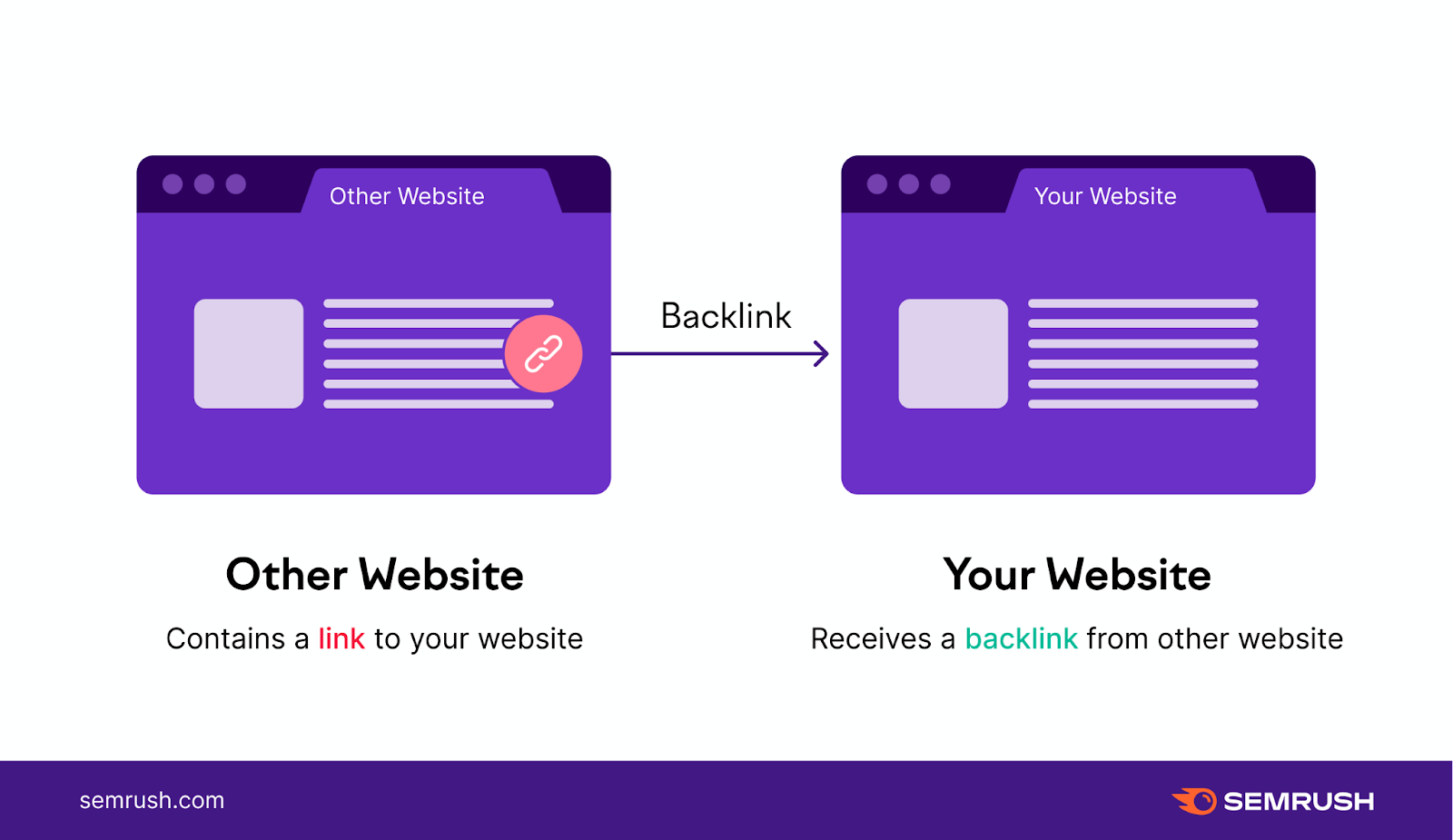

Off-Page SEO

Off-page SEO is exactly what it sounds like. It’s all the factors happening off your website and on other parts of the Internet that could influence your site’s rankings.

One major aspect of off-page SEO is having high-quality backlinks.

Here’s an example of how you can build up your brand’s off-page SEO.

First, you conduct research and publish an informative article about cat allergies on your website. Then, another website cites your article in their own study about common allergies with household pets. They refer to you as a source with a link to your website.

That link becomes a backlink to your site and could boost your SEO. Search engines like Google see backlinks as a good sign, especially when they come from reputable sites.

Off-page SEO is more than just getting backlinks. It can also include:

- Managing your social media presence

- Conducting outreach and digital PR

- Creating content for other platforms, like YouTube

- Managing reviews

- Keeping an eye out for spammy backlinks that could hurt your SEO

If nobody is visiting your website because nobody is talking about it, then you might need better off-page SEO.

Technical SEO

Technical SEO focuses on backend factors on your site that impact crawlability, user experience (UX), and site speed. Good technical SEO lets search engines and people easily understand and navigate your site.

Slow-loading pages, broken images, or difficult navigation, on the other hand, hurt the user experience and are considered “bad technical SEO.”

Some facets of technical SEO include:

- Conducting a technical site audit

- Finding and fixing duplicate content

- Using a robots.txt file to tell search engines like Google which parts of your site to crawl

- Having a clear sitemap

- Optimizing code and file size to encourage fast-loading pages

- Managing a crawl budget

- Migrating a website

- Making the site mobile-friendly
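If you want to sanity-check what your robots.txt actually blocks, Python’s standard library ships a parser for it. The sketch below feeds a made-up robots.txt (the example.com URLs, paths, and sitemap location are all placeholders) into `urllib.robotparser.RobotFileParser` and asks whether Googlebot may fetch a couple of URLs. The standard-library parser applies rules in file order, so it may not match Google’s own rule handling in every edge case; treat it as a quick check, not a final verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; example.com and every path here are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search

Sitemap: https://www.example.com/sitemap.xml
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt rules from a string instead of fetching them over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_crawlable(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """Return True if the parsed rules let this user agent fetch the URL."""
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    for url in [
        "https://www.example.com/blog/cat-allergies",
        "https://www.example.com/cart/checkout",
    ]:
        print(url, "->", "crawlable" if is_crawlable(rules, "Googlebot", url) else "blocked")
    print("Sitemaps:", rules.site_maps())
```

Running it should report the blog post as crawlable and the checkout page as blocked, because `/cart/` is disallowed for every user agent.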

A key to technical SEO is making sure your site is easy to use for both people and search engines. If a human can’t navigate to your site’s most important pages, search engines can’t either.

A clear, useful site architecture, for example, is a technical SEO factor that helps both humans and search engines.
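One rough way to check site architecture is to count how many clicks each page sits from the homepage. The sketch below runs a breadth-first search over a hypothetical internal-link map and reports pages that no chain of links reaches. The page paths, the `INTERNAL_LINKS` map, and the `KNOWN_PAGES` list (which you might pull from your sitemap) are all invented for illustration.

```python
from collections import deque

# Hypothetical internal links: each page maps to the pages it links to.
INTERNAL_LINKS: dict[str, list[str]] = {
    "/": ["/services", "/blog"],
    "/services": ["/services/dog-grooming"],
    "/blog": ["/blog/cat-allergies"],
    "/blog/cat-allergies": ["/"],
}

# Hypothetical list of pages you expect to be findable (e.g., taken from a sitemap).
KNOWN_PAGES = ["/", "/services", "/services/dog-grooming", "/blog",
               "/blog/cat-allergies", "/old-promo"]


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search: the first time a page is reached is its shortest click path."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def unreachable_pages(links: dict[str, list[str]], known: list[str], home: str = "/") -> list[str]:
    """Pages you expect to exist that no chain of internal links leads to."""
    reachable = click_depths(links, home)
    return sorted(page for page in known if page not in reachable)


if __name__ == "__main__":
    for page, depth in sorted(click_depths(INTERNAL_LINKS).items(), key=lambda item: item[1]):
        print(f"{depth} clicks: {page}")
    print("Unreachable:", unreachable_pages(INTERNAL_LINKS, KNOWN_PAGES))
```

Here, `/old-promo` is a known page that nothing links to, so it shows up as unreachable. That is the kind of page a visitor (or a crawler following links) would struggle to find.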

Other Types of SEO

Beyond those three major buckets, there are plenty of other ways to improve your visibility on search engines.

Here are a few that don’t always fall neatly into one of the categories above.

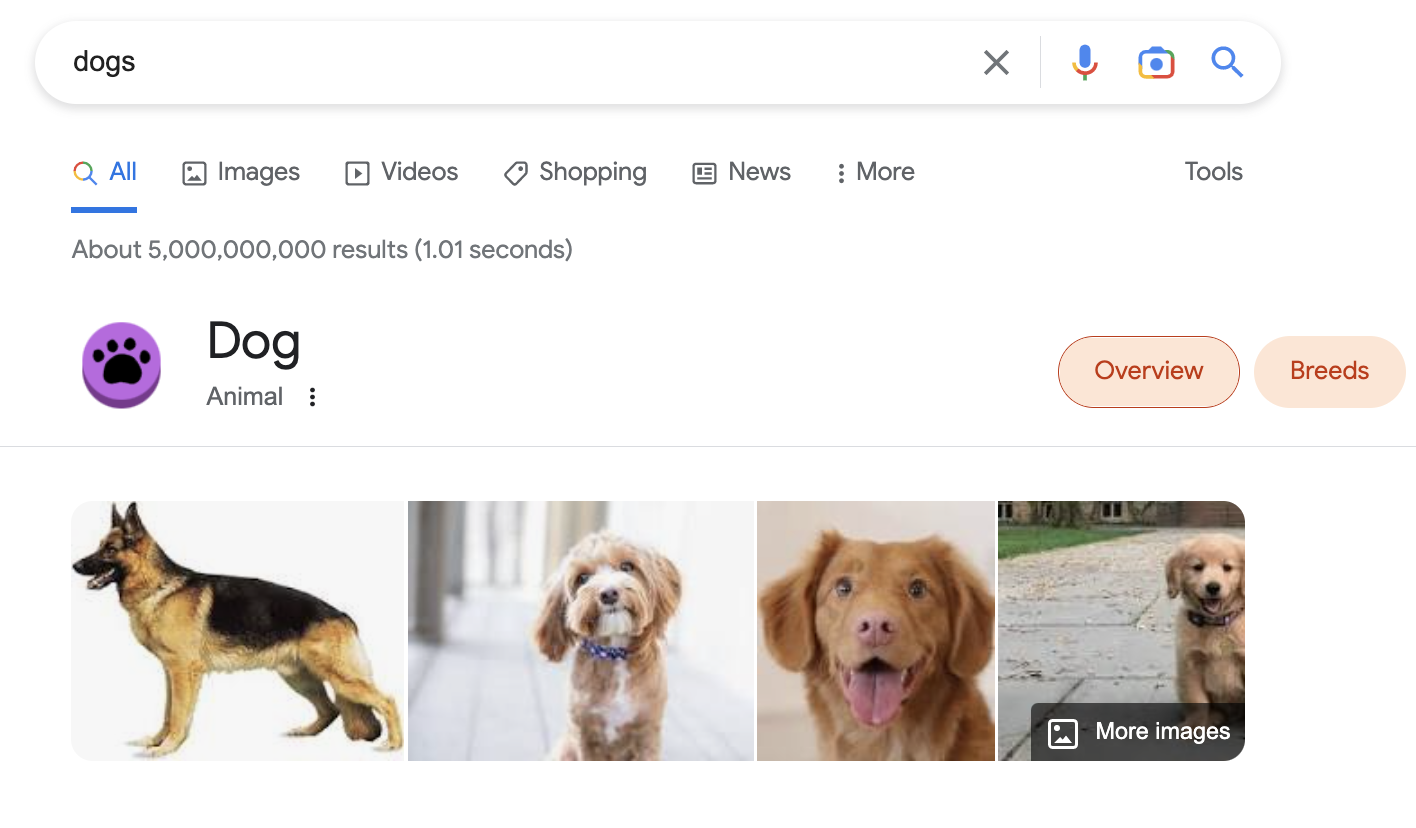

Image SEO

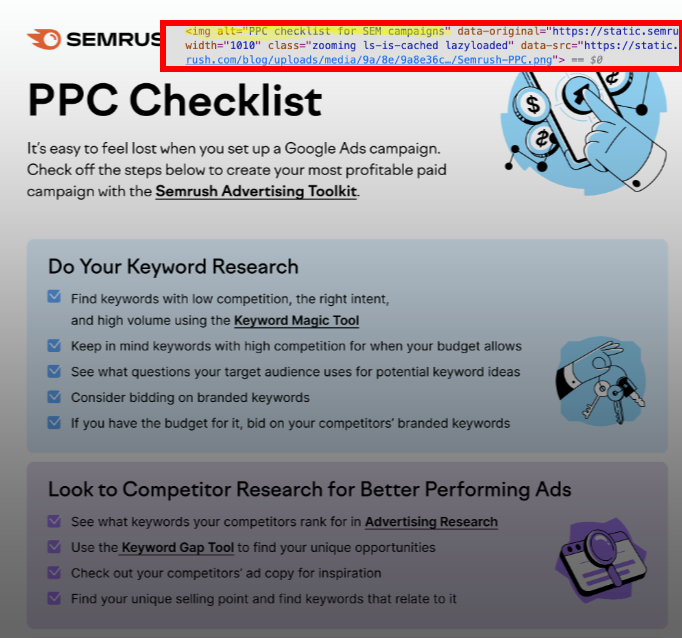

Yes, your images need to have optimized HTML tags, just like the rest of the content on your website.

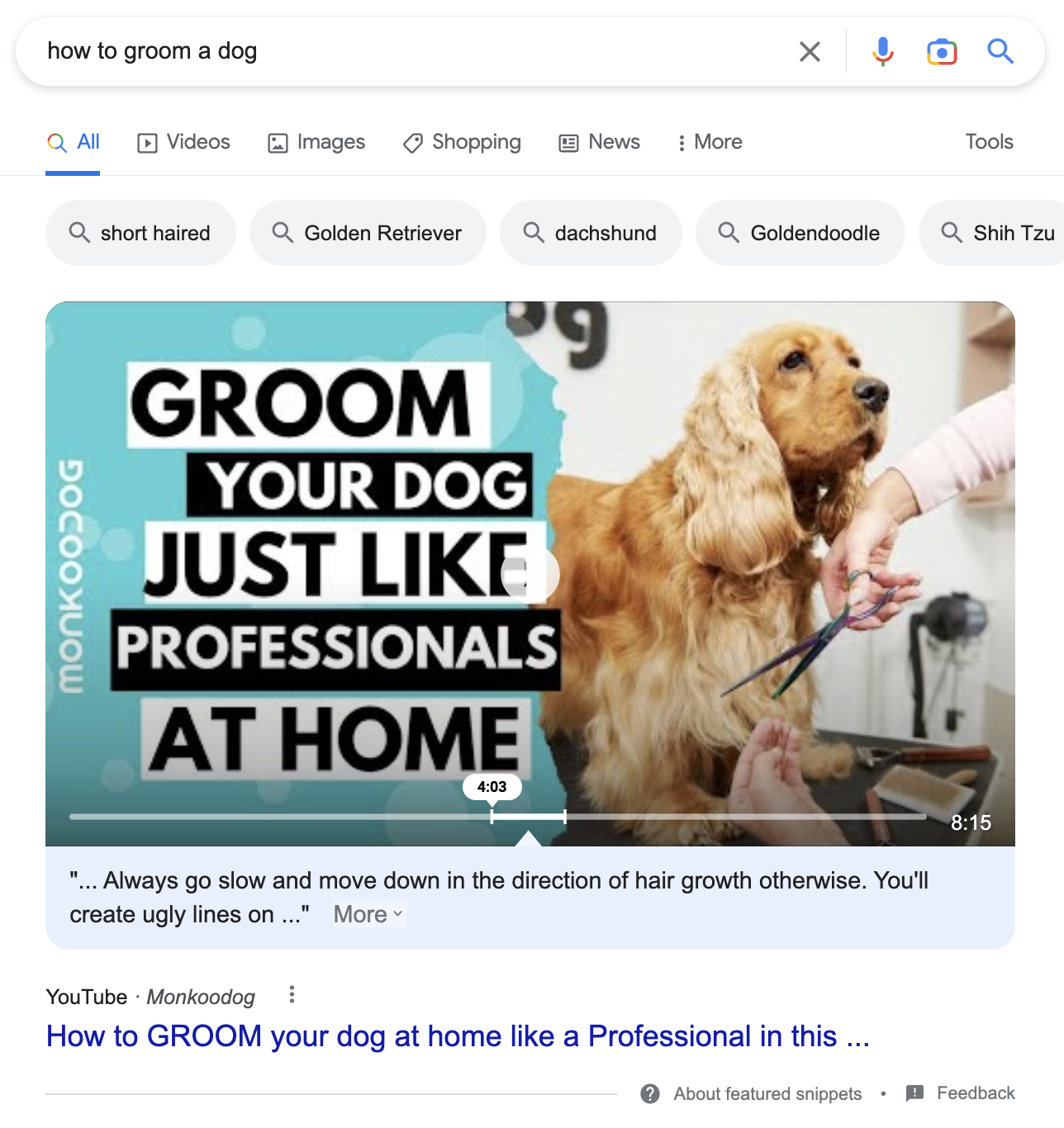

Image SEO helps the images on your website show up in Google SERP features like below:

And since these features can appear right at the top of the SERP, an image could lead a searcher straight to your site. Google’s John Mueller has even talked about optimizing your image filenames for search:

“We do recommend doing something with the filenames in our image guidelines.”

He also recommends filling out alt text (text that displays when an image fails to load and that screen readers read aloud for people with visual impairments) and making sure the surrounding text is relevant.
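To put that advice into practice on a single page, you could scan its HTML for images without alt text and for camera-style filenames like `IMG_1234.jpg`. The sketch below uses Python’s built-in `html.parser`. The sample HTML and the `GENERIC_NAME` pattern for “generic” filenames are hypothetical stand-ins for your own pages and naming rules. It only flags a missing `alt` attribute, because an empty `alt=""` is the accepted way to mark purely decorative images.

```python
import re
from html.parser import HTMLParser
from posixpath import basename
from urllib.parse import urlparse

# Hypothetical heuristic: camera-style names such as IMG_1234.jpg or DSC-0042.png.
GENERIC_NAME = re.compile(r"^(img|dsc|image|photo)[_-]?\d+\.\w+$", re.IGNORECASE)


class ImageAuditor(HTMLParser):
    """Collect <img> sources that lack an alt attribute or use a generic filename."""

    def __init__(self) -> None:
        super().__init__()
        self.missing_alt: list[str] = []
        self.generic_names: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or ""
        # alt="" is valid for decorative images, so only a missing attribute is flagged.
        if "alt" not in attributes:
            self.missing_alt.append(src)
        filename = basename(urlparse(src).path)
        if GENERIC_NAME.match(filename):
            self.generic_names.append(src)


def audit_images(html: str) -> tuple[list[str], list[str]]:
    """Return (images missing alt text, images with generic filenames)."""
    auditor = ImageAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor.missing_alt, auditor.generic_names


if __name__ == "__main__":
    page = """
    <img src="/images/golden-retriever-grooming.jpg" alt="Groomer trimming a golden retriever">
    <img src="/uploads/IMG_1234.jpg">
    <img src="/icons/divider.png" alt="">
    """
    missing, generic = audit_images(page)
    print("Missing alt text:", missing)
    print("Generic filenames:", generic)
```

For the sample page, both lists contain only `/uploads/IMG_1234.jpg`: the descriptive filename passes, and the decorative divider’s empty alt text is left alone.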

Video SEO

Video SEO relies on optimizing parts of a video such as its:

- Title

- URL

- Tags

- Description

And there’s more to it. You can also add a few lines of structured data to your site’s code to help your videos get seen on search engines through SERP features.

Structured data helps Google understand important information about your website—including video content. YouTube automatically adds structured data with options like key moments.

The image above shows key moments in action: an important clip plays to quickly show searchers how to groom a dog, and it shows Google the important parts of the video.

To learn more about structured data for videos, you can read our guide on it here.
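For a rough idea of what that structured data looks like, here is a sketch that builds a schema.org `VideoObject` with `Clip` entries (the markup behind key moments) and wraps it in a JSON-LD `<script>` tag. The video title, URLs, dates, and the `?t=` timestamp parameter are all hypothetical, and the helper names are made up for this example. Check Google’s video structured data documentation for the properties it currently requires before adding markup to a real page.

```python
import json


def build_video_object(name: str, description: str, thumbnail_url: str,
                       upload_date: str, page_url: str, content_url: str,
                       clips: list[tuple[str, int, int]]) -> dict:
    """Build schema.org VideoObject data; each clip is (label, start_seconds, end_seconds)."""
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": [thumbnail_url],
        "uploadDate": upload_date,  # ISO 8601 date/time
        "contentUrl": content_url,
        "hasPart": [
            {
                "@type": "Clip",
                "name": label,
                "startOffset": start,
                "endOffset": end,
                # Assumes the video page can jump to a timestamp via ?t=<seconds>.
                "url": f"{page_url}?t={start}",
            }
            for label, start, end in clips
        ],
    }


def as_script_tag(data: dict) -> str:
    """Wrap the data in the JSON-LD script tag that goes in the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


if __name__ == "__main__":
    video = build_video_object(
        name="How to Groom a Dog at Home",
        description="Step-by-step dog grooming tips.",
        thumbnail_url="https://www.example.com/thumbs/dog-grooming.jpg",
        upload_date="2023-01-15T08:00:00+00:00",
        page_url="https://www.example.com/videos/dog-grooming",
        content_url="https://www.example.com/media/dog-grooming.mp4",
        clips=[("Brushing the coat", 30, 95), ("Trimming the nails", 96, 180)],
    )
    print(as_script_tag(video))
```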

Many of these techniques also help videos get more views on YouTube, and YouTube SEO is a niche of SEO in itself.

Ecommerce SEO

Stores that sell products or services online can use ecommerce SEO to get more online traffic—and more sales too.

Boosting an ecommerce site in search engines takes more than updating product titles and descriptions. It may also mean optimizing a brand’s presence on platforms it doesn’t own (like etsy.com or amazon.com).

Because most ecommerce markets are highly competitive, top-notch SEO is even more necessary to compete for online shoppers.

Mobile SEO

A popular study by bankmycell.com found that over 83% of the world’s population owns a smartphone. Brands can’t succeed without making sure their sites work on every device.

A main part of mobile search optimization is making it easy for searchers to use your site on their phones. That goes back to technical SEO: optimizing your code to make sure the mobile version of the site is fast and easy to use.

Another facet of mobile SEO is finding the keywords people search for on their phones, which may differ from what they search for on a desktop. To find those keywords, conduct mobile keyword research.

Local SEO

Businesses that have physical locations can use local SEO to get more foot traffic. Optimizing content on your site and on platforms like Google Business Profile (aka Google My Business) can help put your brand in front of an interested local audience.

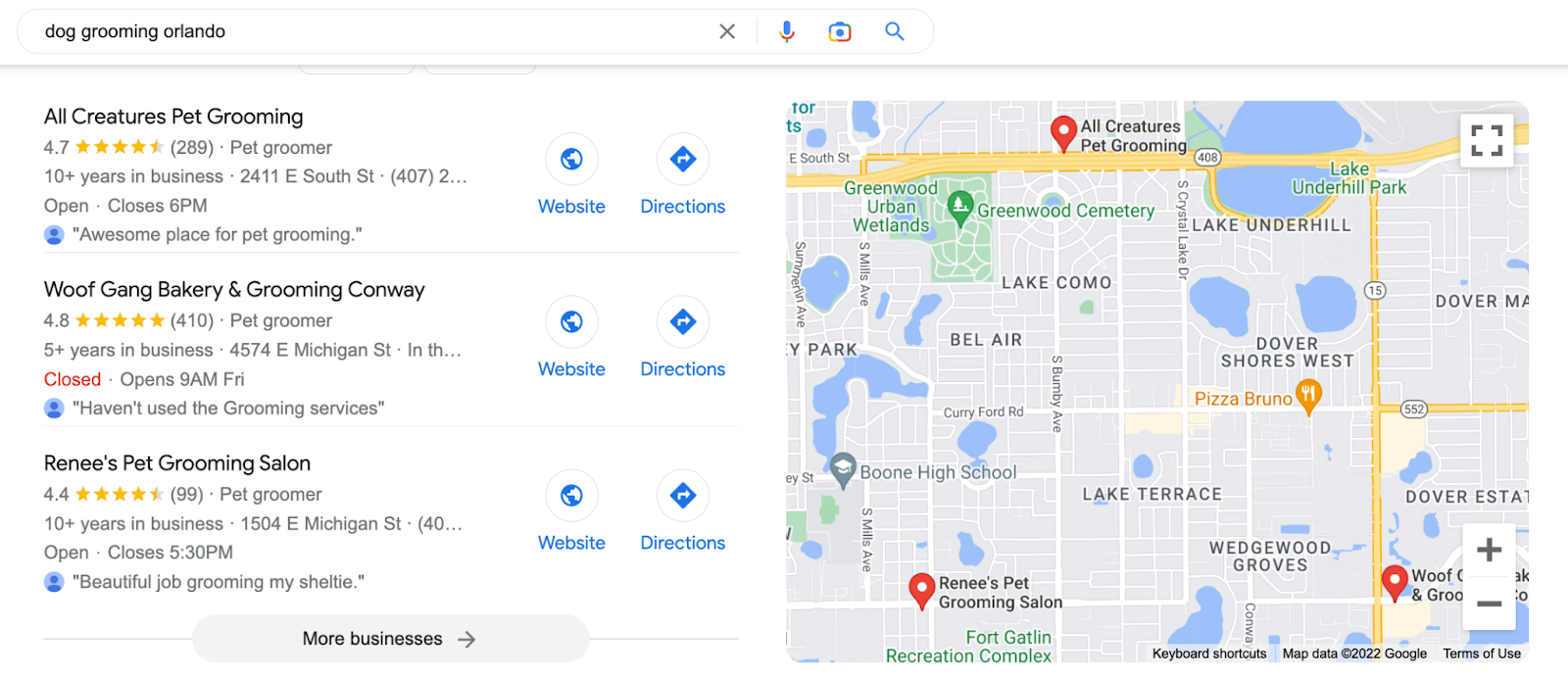

Let’s say you need to get your dog groomed in the city of Orlando:

The businesses that show up when you search on Google are typically the ones with the strongest local SEO.

A dog grooming business in Orlando would need to improve its local SEO to show up in a Google search engine result page (SERP) feature like the one above.

Local SEO typically consists of (but is not limited to):

- Managing online citations

- Setting up a Google Business Profile

- Targeting and tracking local keywords

- Creating site content to target local searches

- Leveraging local connections to build partnerships and backlinks

Local businesses that want to have good SEO should still focus on their on-page, off-page, and technical SEO. The list above is about specific actions that directly affect local rankings (in addition to standard SEO best practices).

How to Figure Out What Kind of SEO Services You Need

“Good SEO” is subjective and depends on the audience and competition in your market.

The best SEO plan for an online children’s clothing store would look quite different from the best plan for a large hospital.

Because there are so many ways to improve SEO, you’ll have to do some thinking to determine what’s best for your site and your brand.

Here’s how to figure out what kind of SEO services you might need:

Do a Content Audit

Running a content audit will tell you how the content on your site is performing, both good and bad.

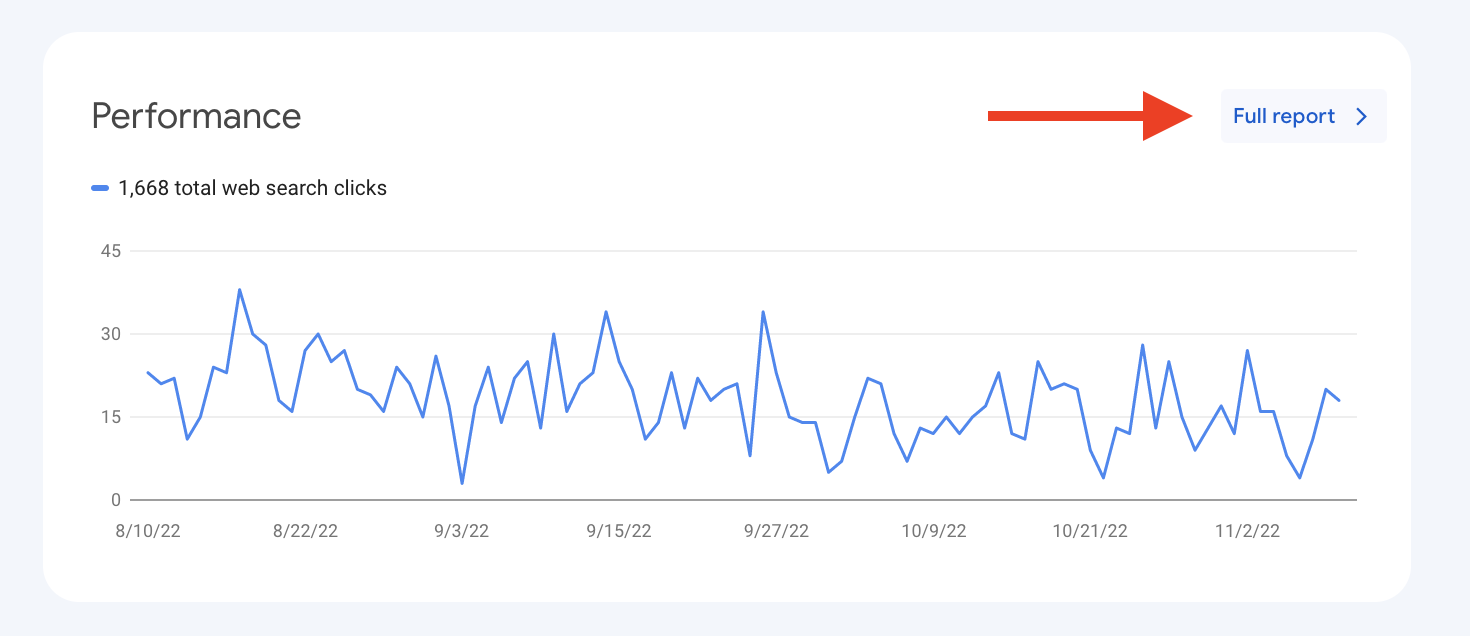

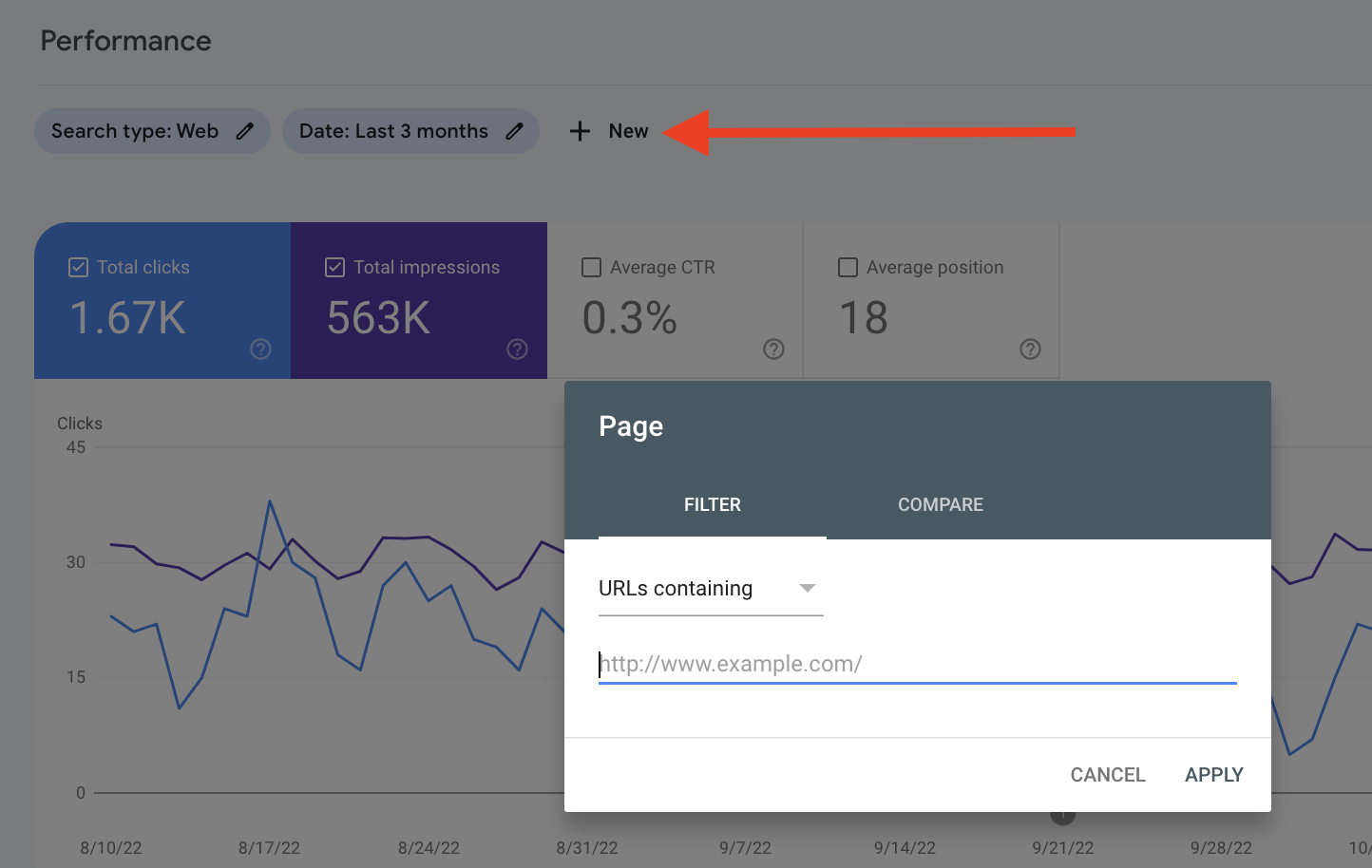

You can use Google Search Console to see which content could use some sprucing up.

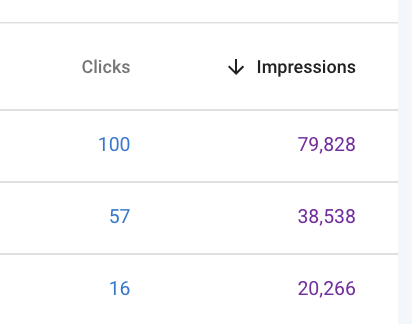

On the main dashboard, open the full report under Performance.

Filter the report by the URL of the page you want to improve.

Then sort by impressions and look for content that gets plenty of impressions for a keyword but not many clicks.

This could be an opportunity to improve the content for this keyword.
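If you export the query data from that report, a few lines of code can do the sorting and filtering for you. The sketch below works on made-up rows rather than a real export, and the cutoffs (at least 500 impressions, a click-through rate under 2%) are arbitrary examples you’d tune to your own site.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    clicks: int
    impressions: int

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks divided by impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0


def find_opportunities(rows: list[QueryRow], min_impressions: int = 500,
                       max_ctr: float = 0.02) -> list[QueryRow]:
    """Keywords that are seen often but rarely clicked, most impressions first."""
    candidates = [r for r in rows if r.impressions >= min_impressions and r.ctr < max_ctr]
    return sorted(candidates, key=lambda r: r.impressions, reverse=True)


# Hypothetical rows, shaped like a query export from the Performance report.
SAMPLE_ROWS = [
    QueryRow("teeth whitening at home", clicks=40, impressions=5200),
    QueryRow("teeth whitening cost", clicks=310, impressions=4100),
    QueryRow("does teeth whitening hurt", clicks=6, impressions=900),
    QueryRow("whitening strips vs trays", clicks=2, impressions=300),
]

if __name__ == "__main__":
    for row in find_opportunities(SAMPLE_ROWS):
        print(f"{row.query}: {row.impressions} impressions, CTR {row.ctr:.1%}")
```

With the sample rows, only “teeth whitening at home” and “does teeth whitening hurt” make the cut: the cost query already earns plenty of clicks, and the strips-vs-trays query doesn’t get enough impressions yet.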

Run a Technical Audit

You can also run a free technical audit of your website with Google Search Console.

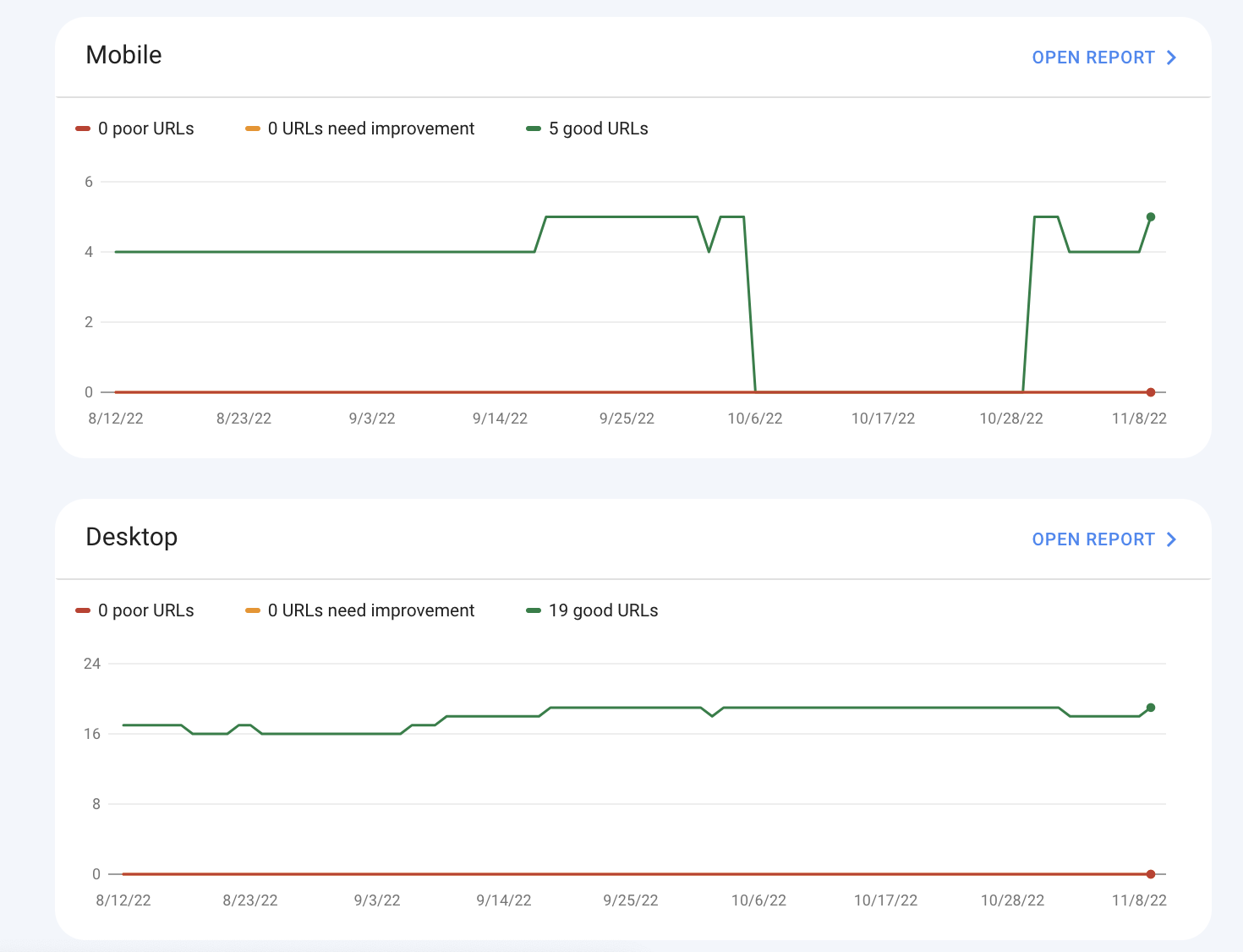

Go to the dashboard and click on Core Web Vitals.

This report will show you whether your website has technical issues that could be scaring people away and hurting your SEO.

Want to know more than what Google Search Console can tell you?

Try out Semrush’s Site Audit to identify issues with your technical SEO. Even if you don’t have a subscription, you can sign up for a free plan today and run a technical audit of your site.

Run a Technical Audit with Semrush

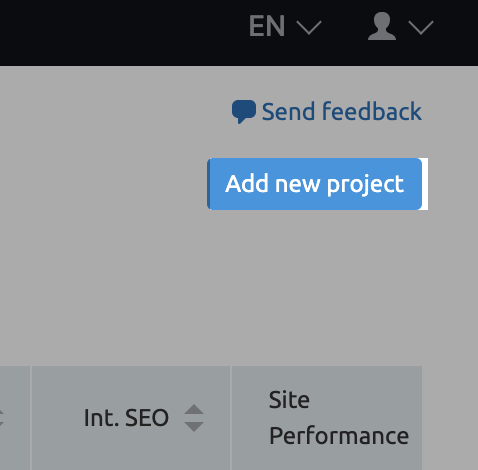

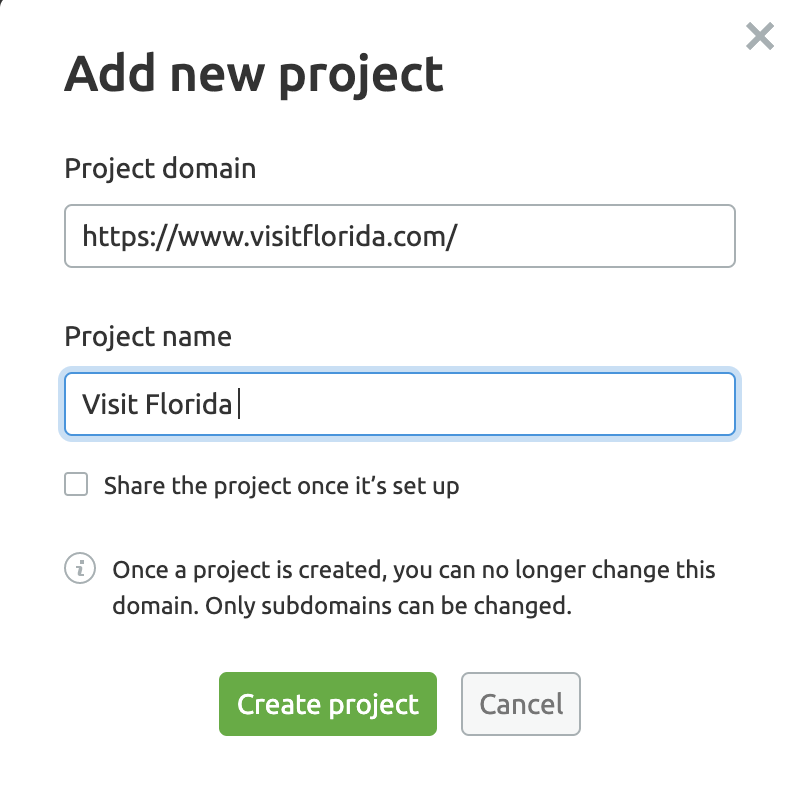

Go to the Site Audit tool page and add a new project (or go to your existing project) to launch the tool.

To add a new project, enter the domain you want to analyze and a project name. Then, create the project.

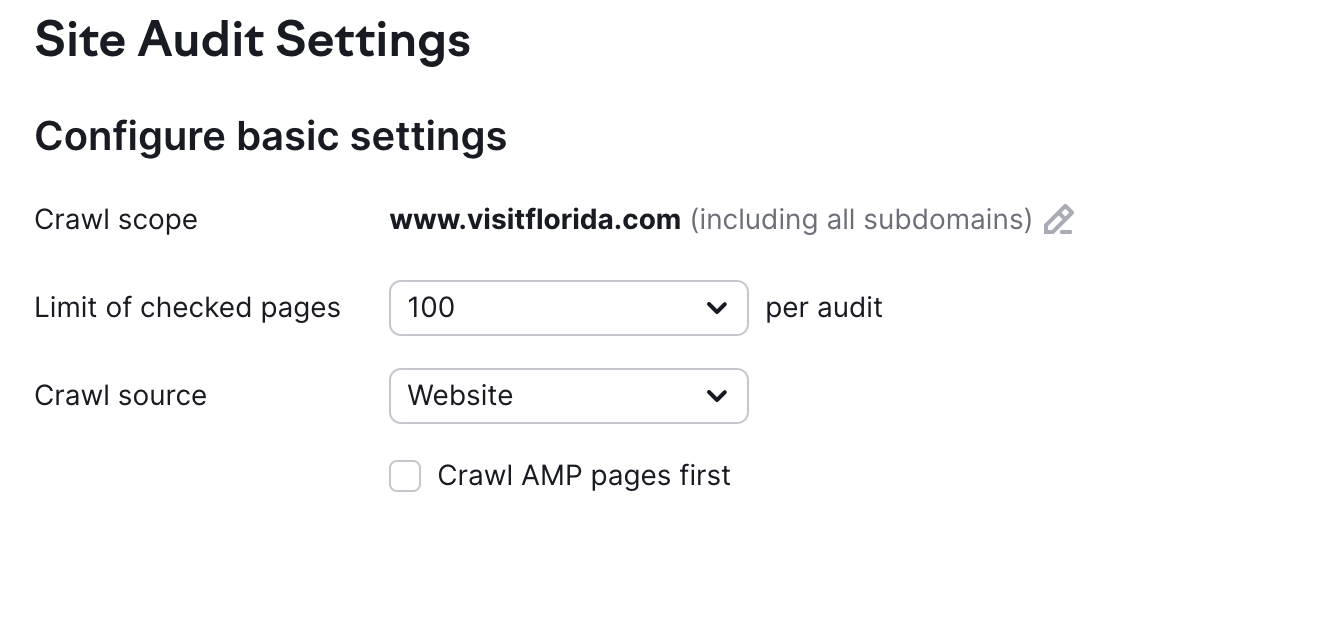

Set up how many pages you’d like to audit (free subscribers get 100 per audit).

You can stop here or customize the audit to:

- Crawl only certain URLs

- Change the crawl speed (set up a delay)

- Choose the user agent the audit crawls as (e.g., Googlebot for mobile versus desktop)

- Run audits and send reports on a regular schedule

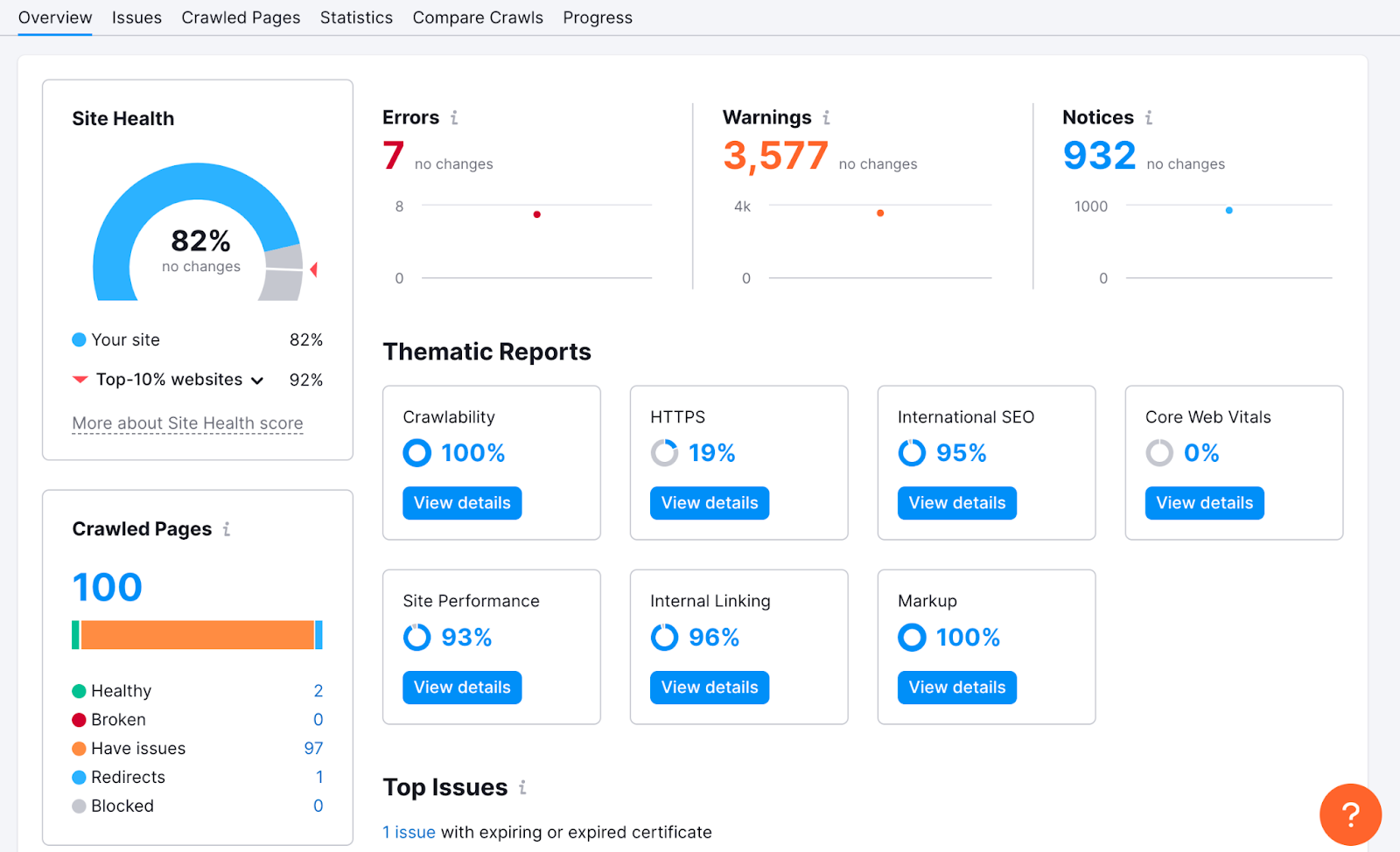

Click on the name of your project once it’s done running. It’ll take you to the Overview where you’ll see a detailed report of your site’s health.

The results of this audit will give you plenty of jumping-off points to identify your biggest SEO issues and see where to start making improvements.

Audit Your Backlink Profile

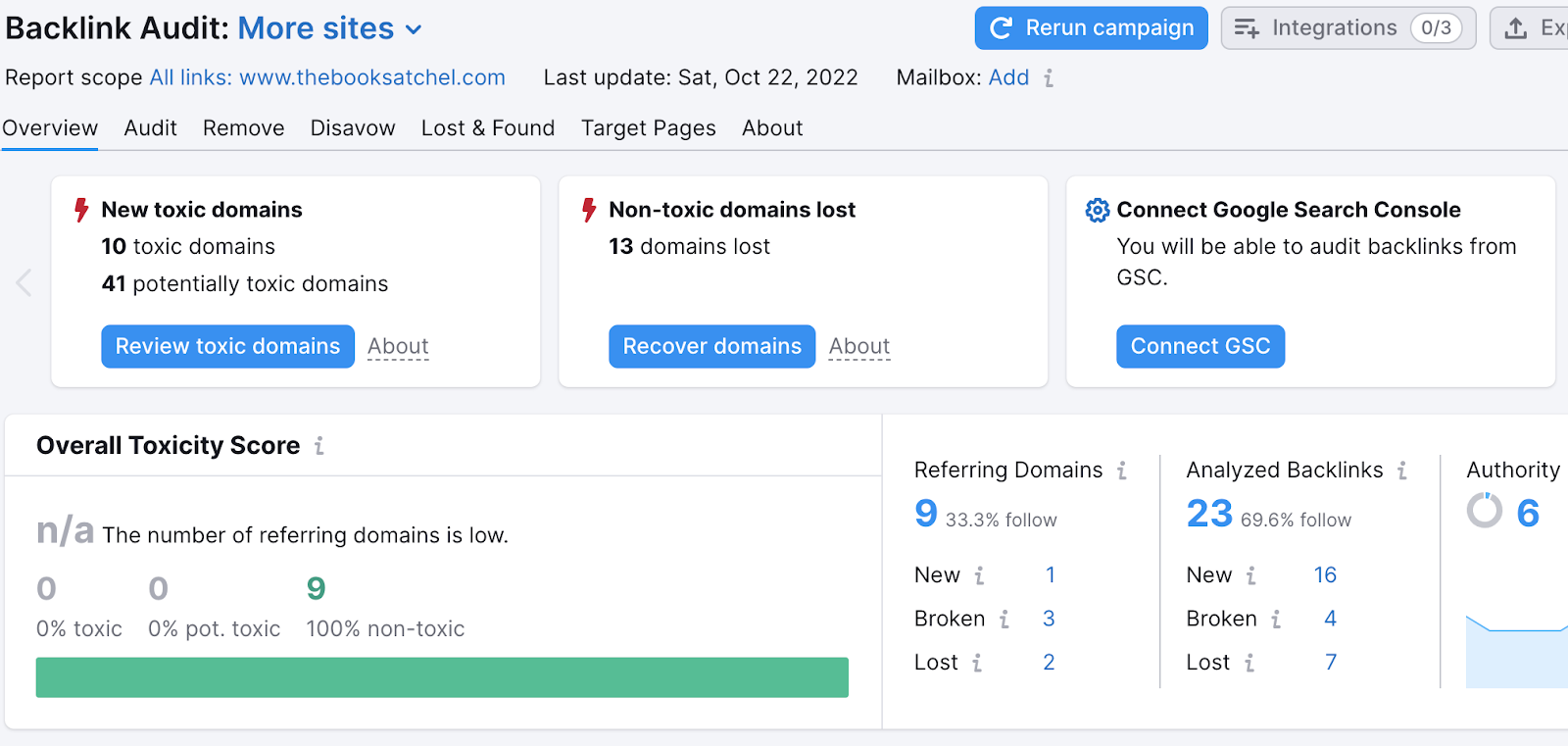

Check out your site’s backlink profile to see if it has reputable backlinks or spammy ones that may hurt your off-page SEO health.

The Semrush Backlink Audit tool (also available with a free Semrush subscription) will give you actionable recommendations on how to get a stronger backlink profile.

You can pass these recommendations directly to an SEO specialist.

What Types of SEO Services Are Out There?

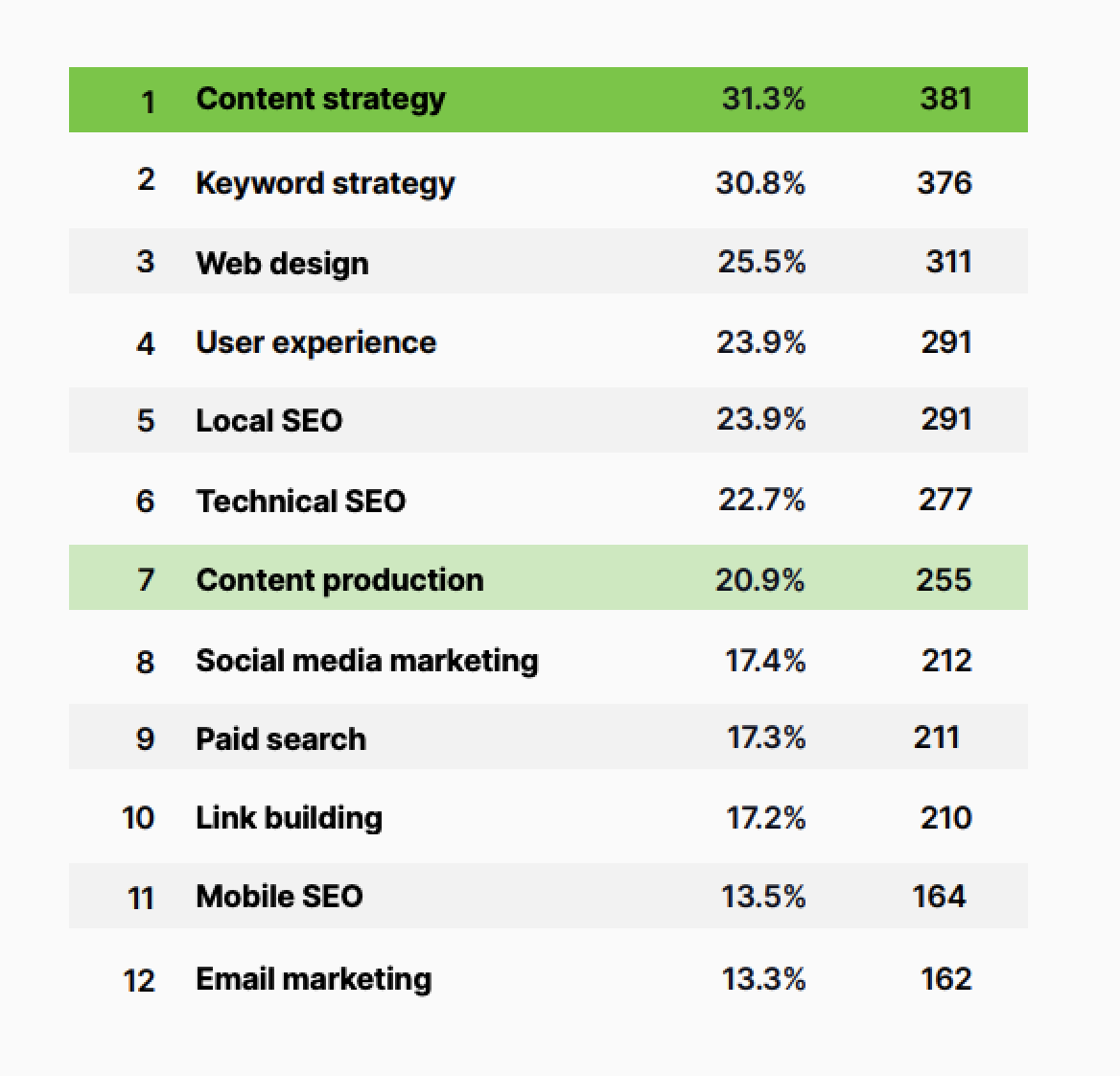

If you know you want to hire an SEO provider, but you’re not sure what services to ask for or expect, a 2021 survey by Search Engine Journal might help you.

The survey asked hundreds of marketing professionals about the most requested digital marketing services of 2021:

Notice that most of them are types of SEO we covered earlier. Let’s take a closer look at those services.

If you don't know what to ask for when talking with an SEO agency, you could mention one of the above services.

Link Building

Remember backlinks and how they can boost SEO?

One popular SEO service is to create link-worthy content and find the right audience for it. A specialist might do this in several ways.

Some are:

- Writing press releases

- Guest blogging

- Publishing new, interesting research

- Conducting an outreach campaign to build new online connections and backlinks

So, part of it is creating material that people would want to link to. The other part is researching the right prospects and reaching out to pitch that content to them.

If you’re considering hiring a link building service, be sure to ask them about how they’re sourcing their prospects, what tactics they will use to reach out and build links, and how many links they expect to build for you.

It’s also important to prioritize the quality of links over sheer quantity.

Web Design, User Experience, and Web Development

Sometimes you need to make large changes to help with your site’s SEO and create a better experience for visitors. A web developer or designer might work on your site’s:

- Speed and load time

- Indexability and crawlability (how easy it is for search engines to find and navigate your site)

- Mobile SEO

They’d run a technical audit to identify problem areas and then fix them.

Other common services in this area include:

- Migrating a website to a new domain

- Migrating themes

- Finding patterns in when and where visitors leave a site

- Creating a sitemap and robots.txt file

- Creating clean site architecture

- Auditing and cleaning up heavy code

- Fixing duplicate content issues
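A sitemap itself is just an XML file that follows the sitemaps.org protocol. Here’s a minimal sketch that generates one with Python’s built-in `xml.etree.ElementTree`. The page URLs and last-modified dates are placeholders, and `build_sitemap` is a hypothetical helper; a real site would usually pull its pages from a CMS or database instead of a hard-coded list.

```python
import xml.etree.ElementTree as ET
from typing import Optional

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, Optional[str]]]) -> str:
    """Return sitemap XML for (url, lastmod) pairs; lastmod may be None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes characters like & for us
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod  # W3C date format, e.g. 2024-01-10
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([
        ("https://www.example.com/", "2024-01-10"),
        ("https://www.example.com/services/dog-grooming", "2024-01-05"),
        ("https://www.example.com/blog?page=2&sort=new", None),
    ]))
```

You’d typically save the output as sitemap.xml at the root of your site and reference it from robots.txt or submit it in Google Search Console.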

Content Marketing (Keyword Strategy, Content Strategy, Content Production)

The Oxford English Dictionary defines content marketing as:

“A type of marketing that involves the creation and sharing of online material (such as videos, blogs, and social media posts) that does not explicitly promote a brand but is intended to stimulate interest in its products or services.”

Here’s an example of how dental digital marketer Mark Oborn used content marketing to improve a client’s conversion rates.

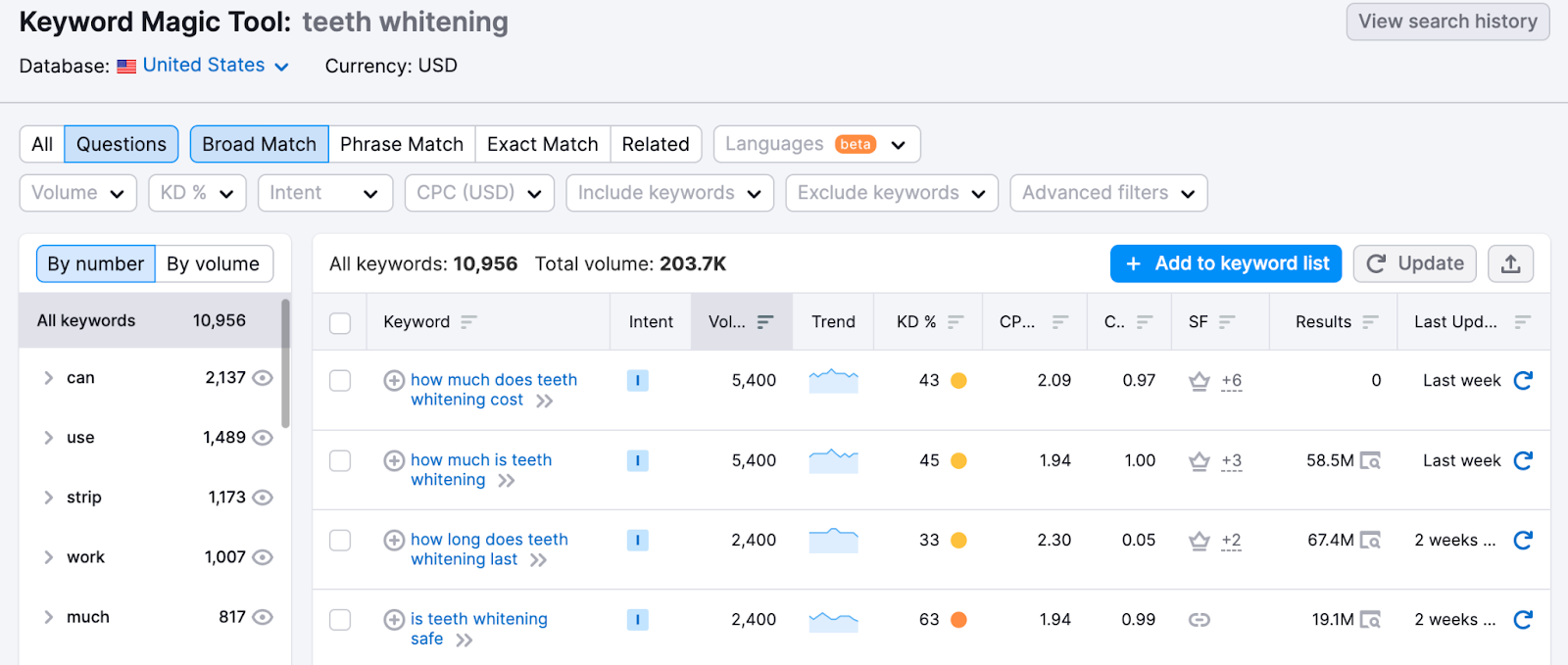

He started his content marketing strategy by exploring keywords for a service his client wanted to rank for: “teeth whitening.”

He researched keywords that could work as good blog topics. In this way, he came up with a keyword strategy.

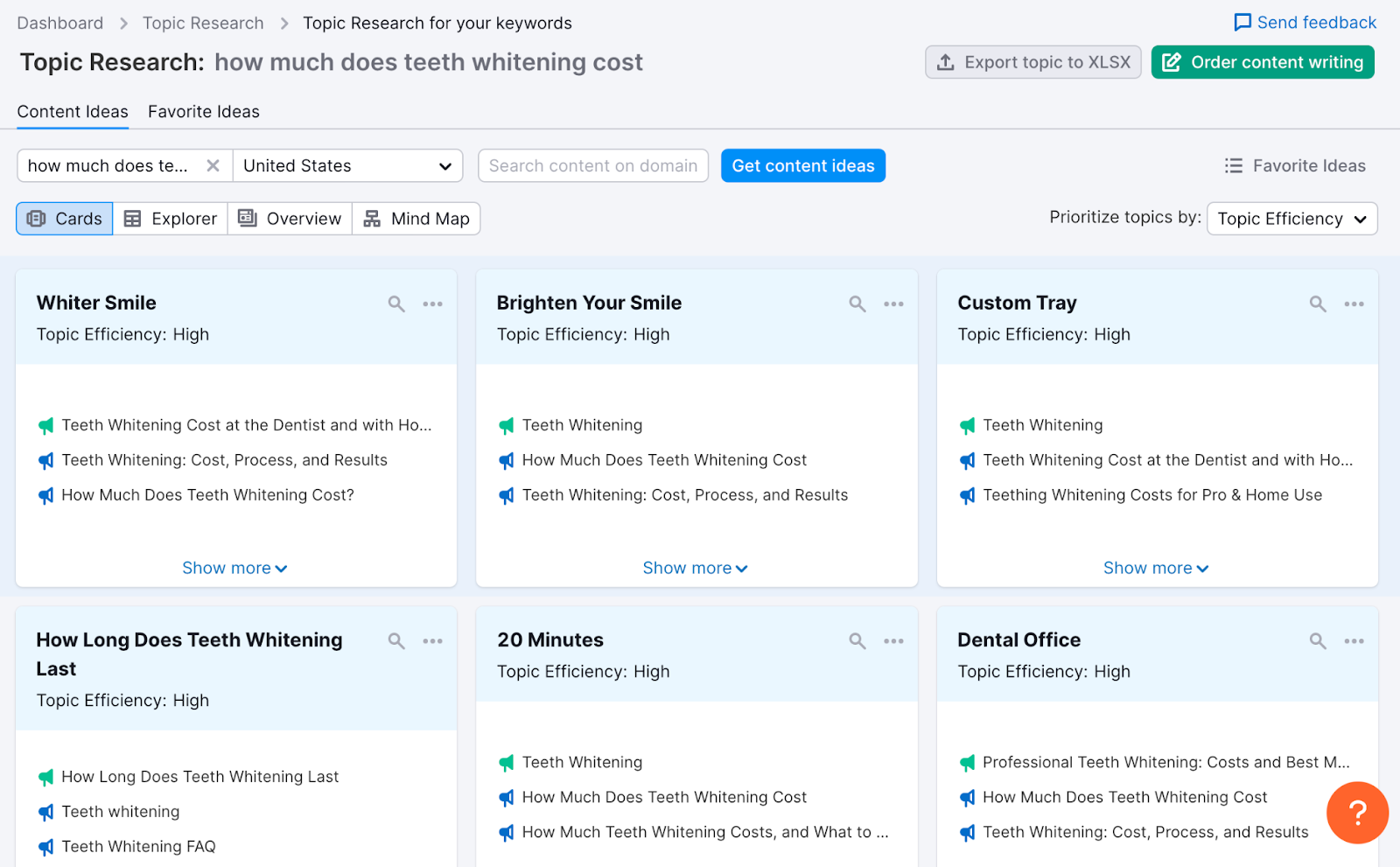

Mark Oborn then used his keyword strategy to form a content strategy. Using the Topic Research tool, he found relevant subtopics and potential headers.

A strategy on its own will just sit there; someone needs to actually produce the content.

A good content writer/developer will use the keywords in your strategy to shape a piece of content that’s optimized for search.

Content specialists may also offer services to update old pieces of content on your site that aren’t doing well. They might improve a site’s on-page SEO by suggesting the right words to use in the site’s meta tags.

You can learn more about content marketing and free tools to help you create optimized posts in this guide.

Online Reputation Management & Social Media Marketing

Your online reputation can be your site’s best friend or foe.

How so?

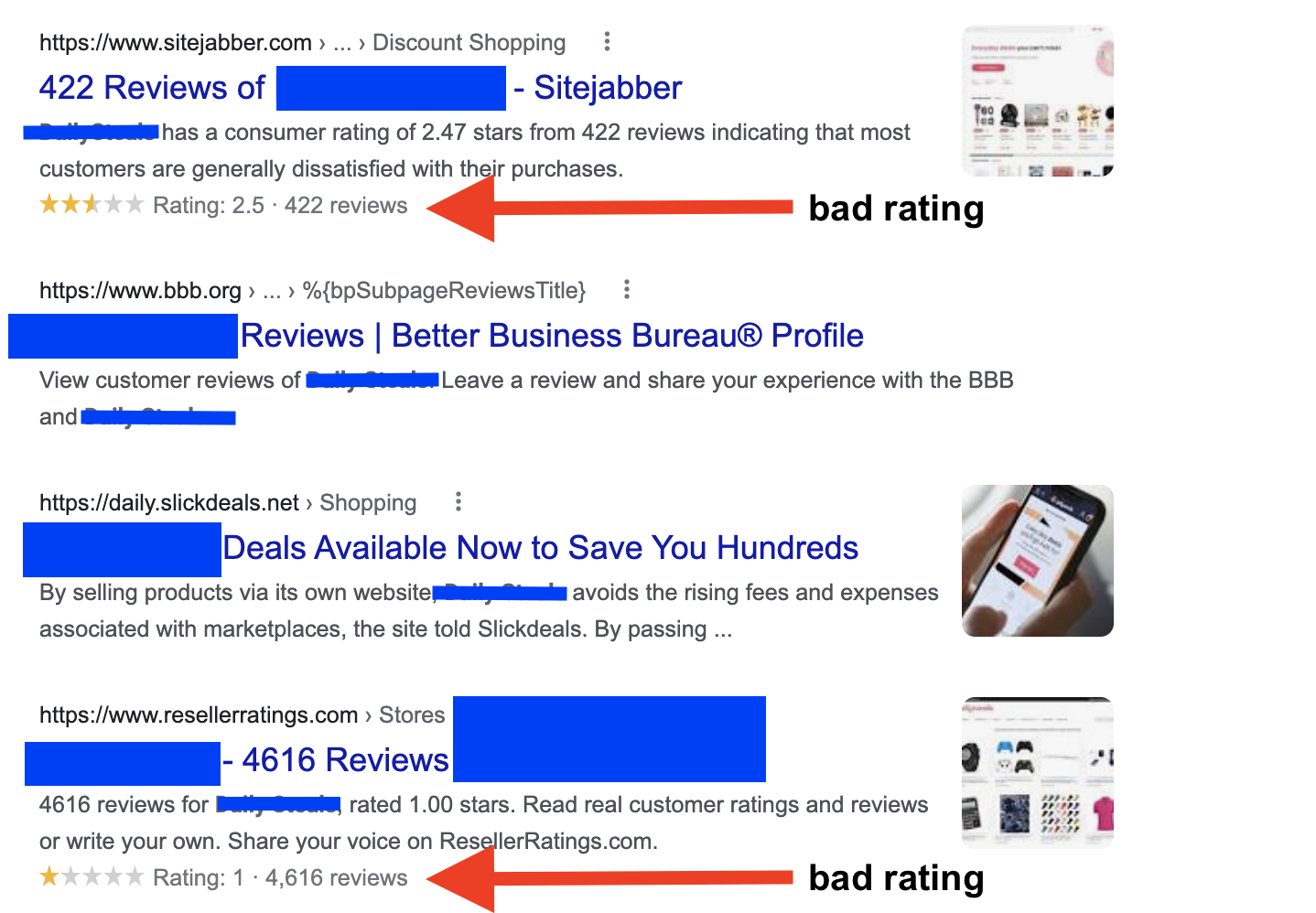

Here’s an example. When a Google search of a brand name leads to bad reviews on the first search engine results page (SERP)...it’s not a good look.

A specialist in this field could help respond to any reputation crises happening around your industry or brand online.

There’s more to it, though. Managing a bad online reputation also means fostering a good one.

This includes:

- Responding to and managing bad reviews

- Encouraging good, honest reviews

- Setting up social media accounts and posts to improve engagement

- Getting mentions from other brands and influencers

These actions would help people understand what they can expect from your company, and they also help build your off-page online presence.

Most importantly, a specialist will track post performance to see which content is actually driving relevant traffic to your website.

Conclusion

We’ve covered a lot of information, so start by identifying which types of SEO your website lacks. An easy way to begin diagnosing your needs is with a free Semrush Site Audit. Then, dive into potential services and tasks to help you in each area.

Here’s what figuring it out may look like:

- Do you have an old site with lots of tech problems slowing things down? Look into technical SEO.

- Do you have a new site without a lot of content on it? Content marketing could help.

- Do you have some cool content but people don't know about it yet? Try optimizing your off-page SEO.

- Do you need to get your website in front of a relevant audience in your local area? Local SEO can help.

The more you know about the various types of SEO services, the easier it will be for you to work with someone (or work on your own) and improve your online visibility.