What Are Google Search Operators?

Google search operators are special commands you can use to find more specific information in Google.

Like this:

The “site:” operator allows you to search for results from a specific website.

In this example, including “site:semrush.com” in your search query will show results only from semrush.com.

Many other Google search operators can make your search results more precise. They also have many practical uses for SEO.

In this post, we’ll guide you through different search operators. And show you how to use them for SEO activities. Such as:

- Building internal links

- Finding websites for guest posting

- Finding site indexing issues

Let's get started.

List of 35+ Google Search Operators

Here are the search operators Google supports:

intitle:

Searches for pages that contain a specific word in the title tag.

Try it out: intitle:pizza

This will show pages with the word “pizza” in the title tag.

allintitle:

Works like “intitle” but will only show pages where the title tag includes all of the specified words.

Try it out: allintitle:pizza recipe

related:

Allows you to find sites related to a particular domain.

Try it out: related:nytimes.com

Google will show other news media sites related to nytimes.com.

OR

Finds results related to one of two search terms. In some cases, results will contain both search terms.

Try it out: pizza OR pasta

This will show pages that are related to either pizza or pasta. Or both.

Alternatively, you can use the pipe (|) operator in place of “OR.” It does the same thing.

Try it out: pizza | pasta

AND

Finds results related to both the searched terms.

Try it out: pizza AND pasta

The AND operator is usually implied in Google search queries. When entering multiple search terms, Google assumes you want to see results that include all of those terms.

So if you search for “pizza pasta,” Google will show results that include both “pizza” and “pasta” anyway.

-

The minus (-) operator excludes a particular term or phrase and shows pages that don’t include the excluded term (or terms).

Try it out: digital marketing -jobs

Google will show pages related to “digital marketing,” but not “digital marketing jobs.”

()

Parentheses “()” group multiple terms or search operators to control how the rest of the query is applied.

Try it out: Tesla (Model S OR Model Y)

Google will show pages that include either “Model S” or “Model Y” in addition to “Tesla.”

*

Acts as a wild card and fills in the missing word or phrase.

Try it out: best * in Paris

Google will fill in the asterisk with different words, such as “places,” “museums,” “hotels,” “restaurants,” “tourist places,” etc.

define:

See the definition for a specific word or concept. The definition is displayed in a special dictionary box, but sometimes Google might just show websites that define the term for you.

Try it out: define:algorithm

This will serve the definition of the word “algorithm.”

filetype:

Finds results in a particular file format (e.g., PDF, XLS, PPT, or DOCX).

Try it out: filetype:pdf climate change

You’ll see search results for PDF files related to climate change.

Alternatively, you can use the “ext:” operator in place of “filetype:”. It does the same thing.

Try it out: ext:pdf climate change

cache:

Allows you to view the most recent cached version of a webpage.

Try it out: cache:semrush.com

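Google will show you the most recent cached version of our homepage. Note: Google retired its cached-page feature in 2024, so this operator may no longer return results.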

site:

Finds results from a specific website.

Try it out: site:nytimes.com

You’ll see results only from nytimes.com.

inurl:

Finds pages that include a specific word in the URL.

Try it out: inurl:shampoo

This will return pages that have the word “shampoo” in the URL.

allinurl:

Works like “inurl” but will only return pages where the URL includes all of the specified terms.

Try it out: allinurl:best baby shampoos

weather:

Allows you to quickly see weather conditions for a particular location.

Try it out: weather:london

Google will display the current temperature, forecast, and other weather-related information.

map:

Shows a map of a specific location.

Try it out: map:new york

Google will display a map of the location. If you click on the map, it will take you to Google Maps. Where you can zoom in or zoom out and explore further.

movie:

Shows information about a specific movie.

Try it out: movie:avengers endgame

Google will display movie-related information. Like reviews, ratings, full cast and crew list, trailers, and showtimes (if it’s currently in theaters near you).

stocks:

Allows you to quickly see stock prices and other financial information of a particular company.

Try it out: stocks:tesla

Google will show the stock price, current market cap, stock chart with historic price details, and other relevant information.

intext:

Looks for pages that contain a specific word in the content.

Try it out: intext:AI

This will return pages that have the word “AI” somewhere within the content.

allintext:

Works like “intext” but will only show pages where page content contains all of the specified words.

Try it out: allintext:SEO tips

Google will show pages with both words in the content.

source:

Finds news articles from a specific source in Google News.

Try it out: tesla source:nytimes.com

You’ll see news articles about Tesla from The New York Times.

in

Lets you convert one unit to another. Applies to currency, weights, distance, temperature, time, etc.

For example, you can search for “999 USD in EUR” to see how much 999 U.S. dollars is worth in euros.

Try it out: 999 usd in eur

“search term”

Using quotation marks around a search query allows you to search for an exact phrase rather than individual words.

Try it out: “best pizza in new york city”

In this example, Google will only show results that include that exact phrase, rather than “best,” “pizza,” and “new york city” separately.

AROUND(X)

Searches for pages where two words appear within “X” words of each other.

Try it out: Tesla AROUND(5) Model S

In this example, Google will return pages where “Tesla” and “Model S” appear within five words of each other in the content.

location:

Narrow your results to a specific location.

Try it out: location:seattle pizza

You’ll see pizza-related results specific to Seattle.

Unreliable & Deprecated Search Operators

Google search operators have been in use for years.

But did you know Google has terminated some operators? Or that some operators don’t work as effectively as they used to?

Let’s look at the non-working Google search operators. As well as the ones that return inconsistent results and shouldn’t be relied on.

blogurl:

Find all of a domain's blog URLs. The operator was useful for performing searches in Google Blog Search, which was shut down in 2011.

Example: blogurl:semrush.com

Although this operator has been deprecated, it still returns a few relevant results in a regular Google search.

#..#

Search for information within a specific range of numbers. For example, if you want to find articles about the best ’90s movies, you can use “best movies 1990..1999” as your search query.

Example: best movies 1990..1999

Our testing found that this operator returns mixed results by displaying movies for the years 1990 to 1999 but also 2000 and beyond.

inanchor:

Allowed you to find webpages that have links pointing to them using a specific anchor text.

For example, if you want to find webpages that have links pointing to them with the anchor text “books,” you can use “inanchor:books” as your search query.

Example: inanchor:books

Note: The operator no longer consistently returns relevant results.

allinanchor:

Works like “inanchor” but would only return pages where a link’s anchor text contains all specified words.

Example: allinanchor:best books 2024

Note: The operator doesn’t seem to work. You’ll often see false positives.

+

Find pages that mention a specific word or phrase exactly as written.

For example, if you search for “Semrush +team,” Google will only show you pages that have the words “Semrush” and “team” together. And not pages that have “Semrush” and “team” separately or in a different order.

Example: Semrush +team

Note: The “+” operator has been discontinued by Google. You can use quotation marks to find webpages that contain exact matches.

#

See blogs, social media posts, and news articles that used a specific #hashtag.

Example: #throwbackfriday

Note: This one doesn’t seem to work. It often returns false positives.

~

Finds pages that contain synonyms for a word or phrase.

For example, if you search for “~healthy recipes,” Google will show pages that contain words or phrases related to healthy recipes, such as nutritious recipes, low-fat recipes, wholesome recipes, etc.

Example: ~healthy recipes

Note: Google has terminated this operator. For most searches, Google automatically shows pages that include synonyms.

link:

Search for webpages that link to a specific URL. For example, if you search for “link:nytimes.com,” Google will show all webpages that link to The New York Times website.

Example: link:nytimes.com

Note: Google has deprecated this operator, as confirmed by Google’s Gary Illyes on Twitter. It doesn’t return relevant results.

info:

Find more information about a specific URL or domain. Like a cached version, similar sites, links to the site, etc.

Example: info:semrush.com

Note: Google has terminated this operator.

daterange:

Allows you to search for content that was published within a specific date range. The date range must be specified in Julian format.

Example: daterange:23001-23091 SEO

Note: We’ve found that this operator no longer works.

inpostauthor:

Search for content written by a specific author.

Example: inpostauthor:Neil Gaiman

This operator used to work in Google Blog Search, which was retired in 2011. It doesn’t work in regular Google Search.

phonebook:

Find a person’s phone number.

Example: phonebook:elon musk

Google has discontinued the “phonebook:” search operator, as confirmed by a former Google employee in a blog post.

inposttitle:

Look for blog posts with specific words in the title.

Example: inposttitle:SEO tips

This operator was useful for finding relevant blog posts in Google Blog Search. It doesn’t work in regular Google Search.

How to Use Google Advanced Search Operators for SEO

Google search operators are useful for various SEO tasks. Like:

- Building internal links

- Finding site indexing issues

- Finding websites for guest posting

Let’s explore the use cases in more detail.

1. Get Internal Linking Ideas

Internal links are hyperlinks that connect one page on a website to another page on the same website.

They’re important for SEO for three main reasons:

- Internal links help users discover more content on your site

- They help search engines crawl and index your site more efficiently

- They can spread link equity (ranking power) throughout your website

To give your SEO a boost, regularly check your website for internal linking opportunities. And add links where relevant.

Google search parameters can help you generate internal linking ideas.

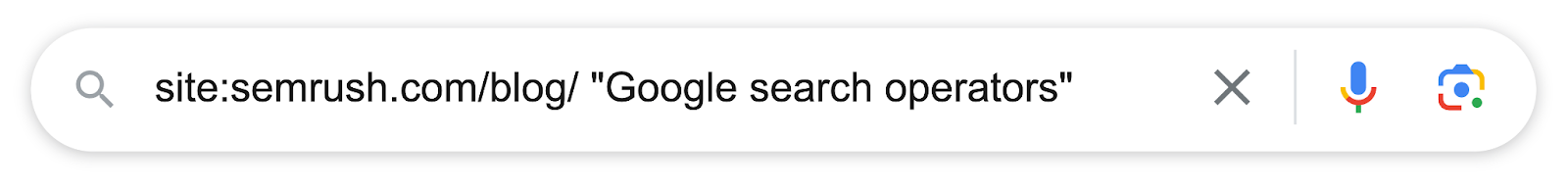

For example, if we want to add internal links to this guide, we can search Google with search operators like this:

Google will show relevant articles where we mention the phrase “Google search operators” somewhere in the content. So we can add internal links from them.
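The exact query is not reproduced in the text above. One plausible form pairs “site:” with a quoted phrase. Here is a minimal Python sketch of that idea. The domain example.com and the helper names internal_link_query and google_search_url are hypothetical, chosen only for illustration.

```python
from urllib.parse import urlencode


def internal_link_query(domain: str, phrase: str) -> str:
    """Build a query for pages on one site that mention an exact phrase."""
    # site: limits results to the domain; quotation marks request an exact phrase.
    return f'site:{domain} "{phrase}"'


def google_search_url(query: str) -> str:
    """Encode a query string as a Google search URL."""
    return "https://www.google.com/search?" + urlencode({"q": query})


# Hypothetical domain and phrase, used only as an illustration.
query = internal_link_query("example.com", "Google search operators")
print(query)
print(google_search_url(query))
```

Each page in the results is a candidate for a new internal link to the guide you’re promoting.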

2. Find Site Indexation Issues

Indexation is the process whereby Google stores your website pages in its search index—a database containing billions of webpages.

Your webpages must be indexed by Google to appear in search results.

And to get traffic from Google.

Google search operators can check whether your website pages are indexed.

Use the “site:” search operator.

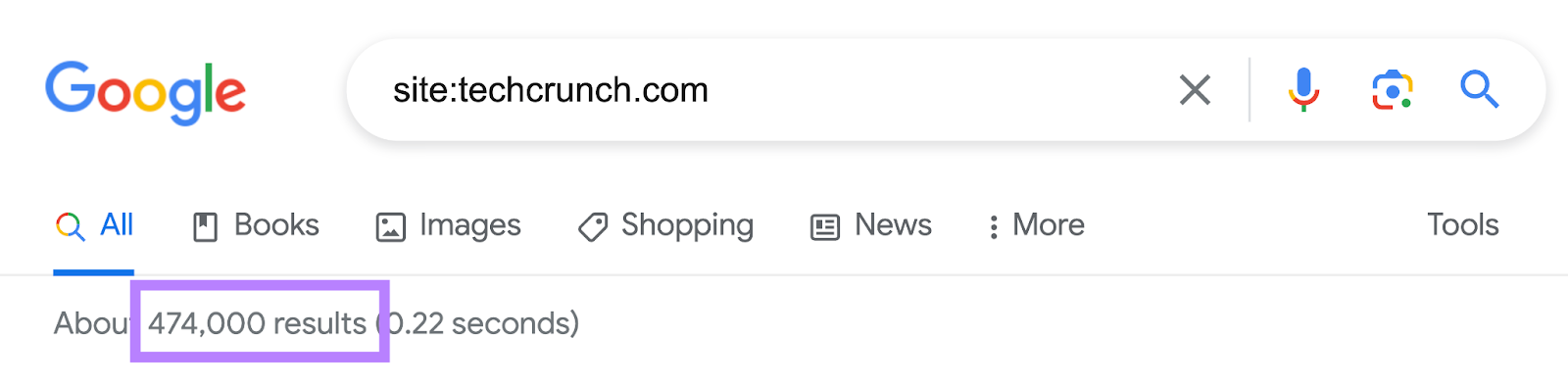

For example, if you want to check the index status of techcrunch.com, search for “site:techcrunch.com.”

This tells you roughly how many of the site’s pages Google has indexed.

Note: These are approximations. If you want to know exactly how many pages of your site Google has indexed, check Google Search Console.

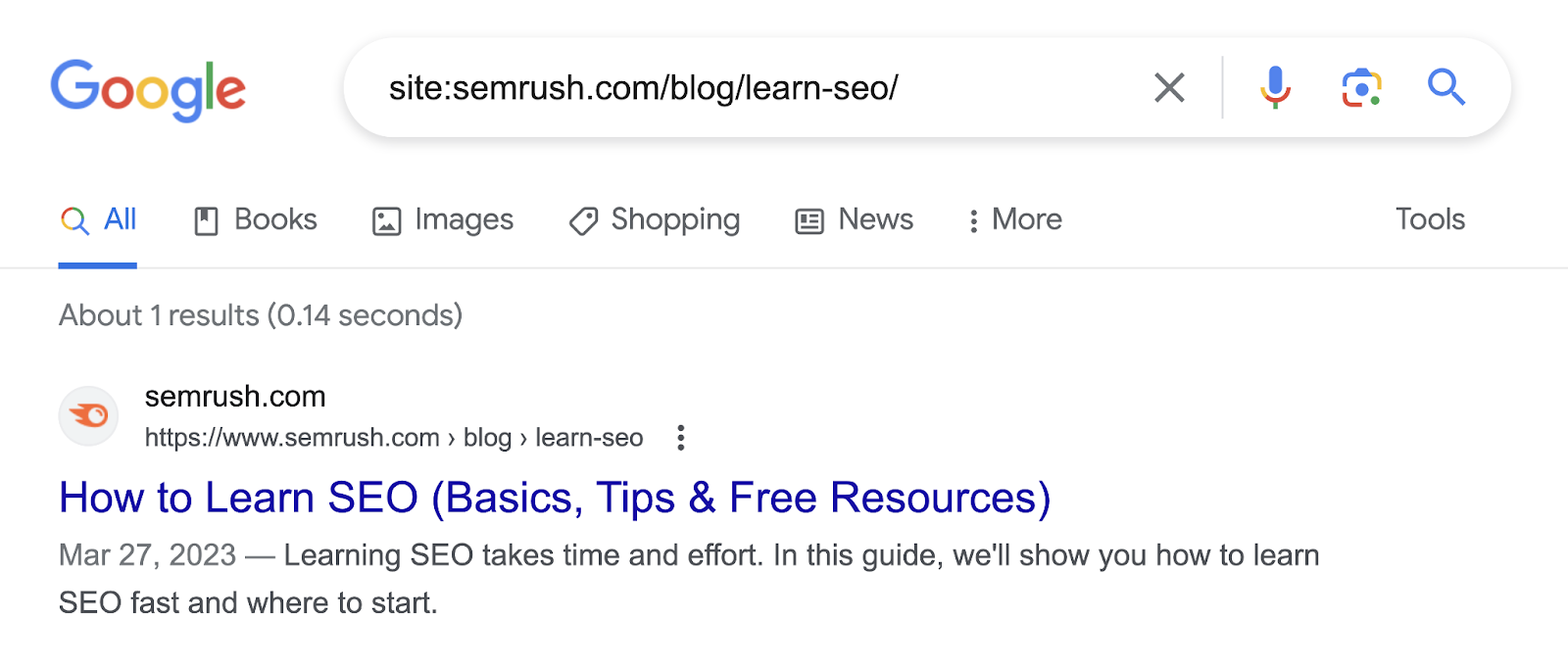

You can also review whether specific pages are indexed by searching the page URL with “site:” search.

This is helpful to confirm whether Google has indexed the new articles you’ve published on your website.

For example, we recently published a new guide to learning SEO.

To confirm whether Google has indexed it, we can search for “site:semrush.com/blog/learn-seo/”

As you can see, the page appears in Google search results. Which means there are likely no indexation issues.
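If you publish often, you may want to generate these “site:” checks in bulk. The sketch below is one way to do it. The function name index_check_query is our own, and stripping the scheme is an assumption made to match the scheme-less examples in this article.

```python
def index_check_query(url: str) -> str:
    """Return a site: query for a domain or a single page URL."""
    # The examples in this article omit the scheme, so strip it for consistency.
    for scheme in ("https://", "http://"):
        if url.startswith(scheme):
            url = url[len(scheme):]
            break
    return f"site:{url}"


# A page URL and a bare domain, both taken from the examples above.
pages = ["https://semrush.com/blog/learn-seo/", "techcrunch.com"]
for page in pages:
    print(index_check_query(page))
```

Paste each printed query into Google. A page that doesn’t appear may not be indexed yet, so confirm in Google Search Console.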

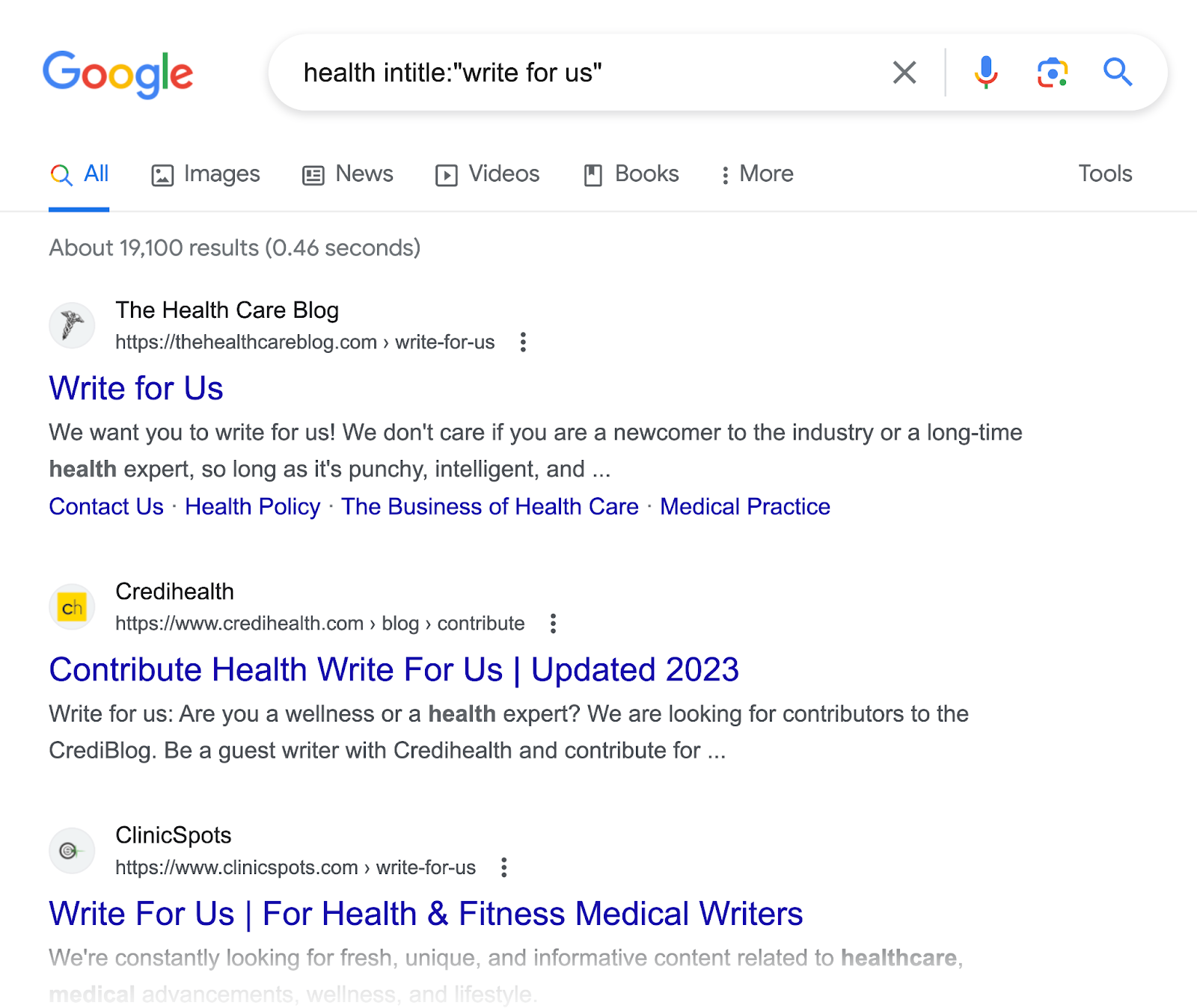

3. Find Websites for Guest Posting

Guest posting is where you write blog posts for other websites in your niche to promote your brand.

Many SEO marketers also use guest posting to build links to their site, according to a recent Twitter poll.

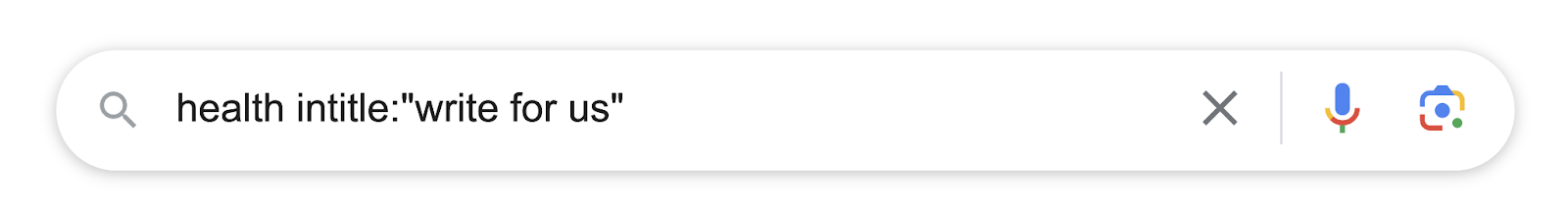

To find relevant guest posting opportunities for your website, search Google with one of these search operators:

- Your keyword intitle:“write for us”

- Your keyword intitle:“become an author”

- Your keyword intitle:“contribute”

- Your keyword intitle:“guest article”

- Your keyword intitle:“submit a post”

- Your keyword intitle:“submit an article”

These operators will return sites that accept guest posts within your niche.

For example, if we want to find guest posting opportunities for health and fitness websites, we’ll search Google like this:

And it will show websites accepting guest posts.

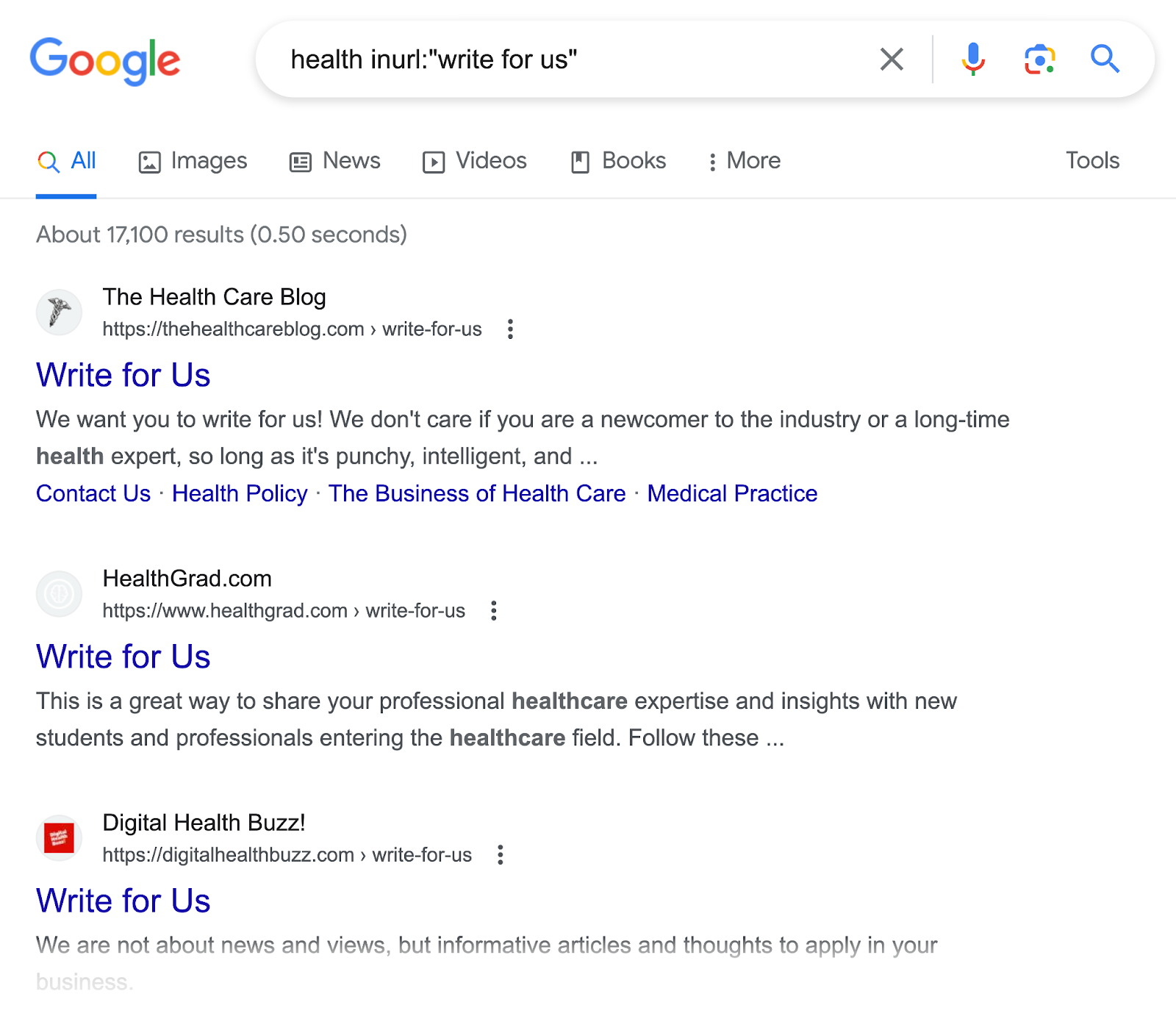

Alternatively, you could also use the “inurl:” search operator.

- Your keyword inurl:“write for us”

- Your keyword inurl:“become an author”

- Your keyword inurl:“contribute”

- Your keyword inurl:“guest article”

- Your keyword inurl:“submit a post”

- Your keyword inurl:“submit an article”
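Both lists follow the same pattern: a keyword, an operator, and a quoted footprint. A short Python sketch can generate every variant for a given keyword. The names FOOTPRINTS and guest_post_queries are hypothetical, and “health and fitness” is just the sample niche from this section.

```python
# Footprint phrases taken from the lists above.
FOOTPRINTS = [
    "write for us",
    "become an author",
    "contribute",
    "guest article",
    "submit a post",
    "submit an article",
]


def guest_post_queries(keyword: str, operator: str = "intitle") -> list[str]:
    """Combine a keyword with each footprint using intitle: or inurl:."""
    if operator not in ("intitle", "inurl"):
        raise ValueError("operator must be 'intitle' or 'inurl'")
    return [f'{keyword} {operator}:"{footprint}"' for footprint in FOOTPRINTS]


for query in guest_post_queries("health and fitness"):
    print(query)
```

Pass operator="inurl" to produce the second list instead.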

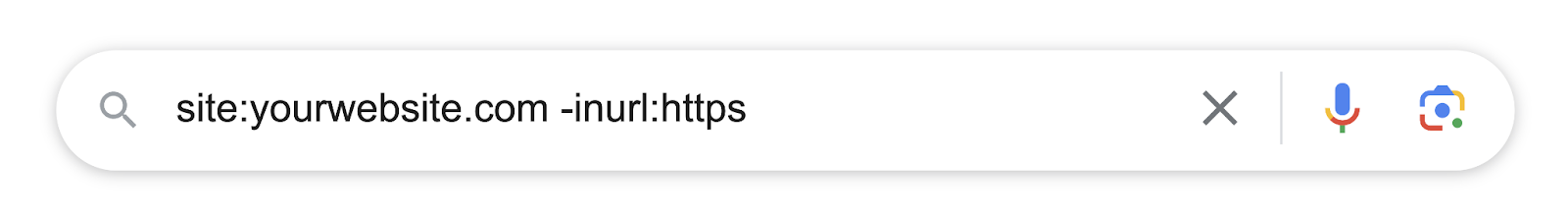

4. Find Non-Secure Pages on Your Domain

Any pages on your website that are still using HTTP (hypertext transfer protocol) are not secure for visitors.

Visitors’ sensitive information, such as passwords and credit card details, can be intercepted and stolen by attackers.

That’s why it’s important to switch to HTTPS (HTTP secure). Which is also a ranking factor for Google.

To find non-secure pages on your website, combine the “site:” and “-inurl:” operators.

Like this:

Let’s deconstruct the Google search syntax we’re using.

Here, we’re using:

- The “site:” operator so Google looks at your entire website

- Then the exclusionary “-inurl:” operator so it only shows non-secure pages (still using HTTP)

This should reveal any non-secure pages you may have on your site.
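The query itself is not shown in the text above. A common form of this combination excludes URLs that contain “https”. The sketch below builds it under that assumption. The helper name non_secure_pages_query and the domain example.com are hypothetical.

```python
def non_secure_pages_query(domain: str) -> str:
    """Build a query for indexed pages whose URL does not contain 'https'."""
    # site: scopes the search to the domain.
    # -inurl:https drops results whose URL contains "https".
    return f"site:{domain} -inurl:https"


print(non_secure_pages_query("example.com"))
```

Treat the results as leads to verify rather than a definitive list, since Google may not have recrawled every page.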

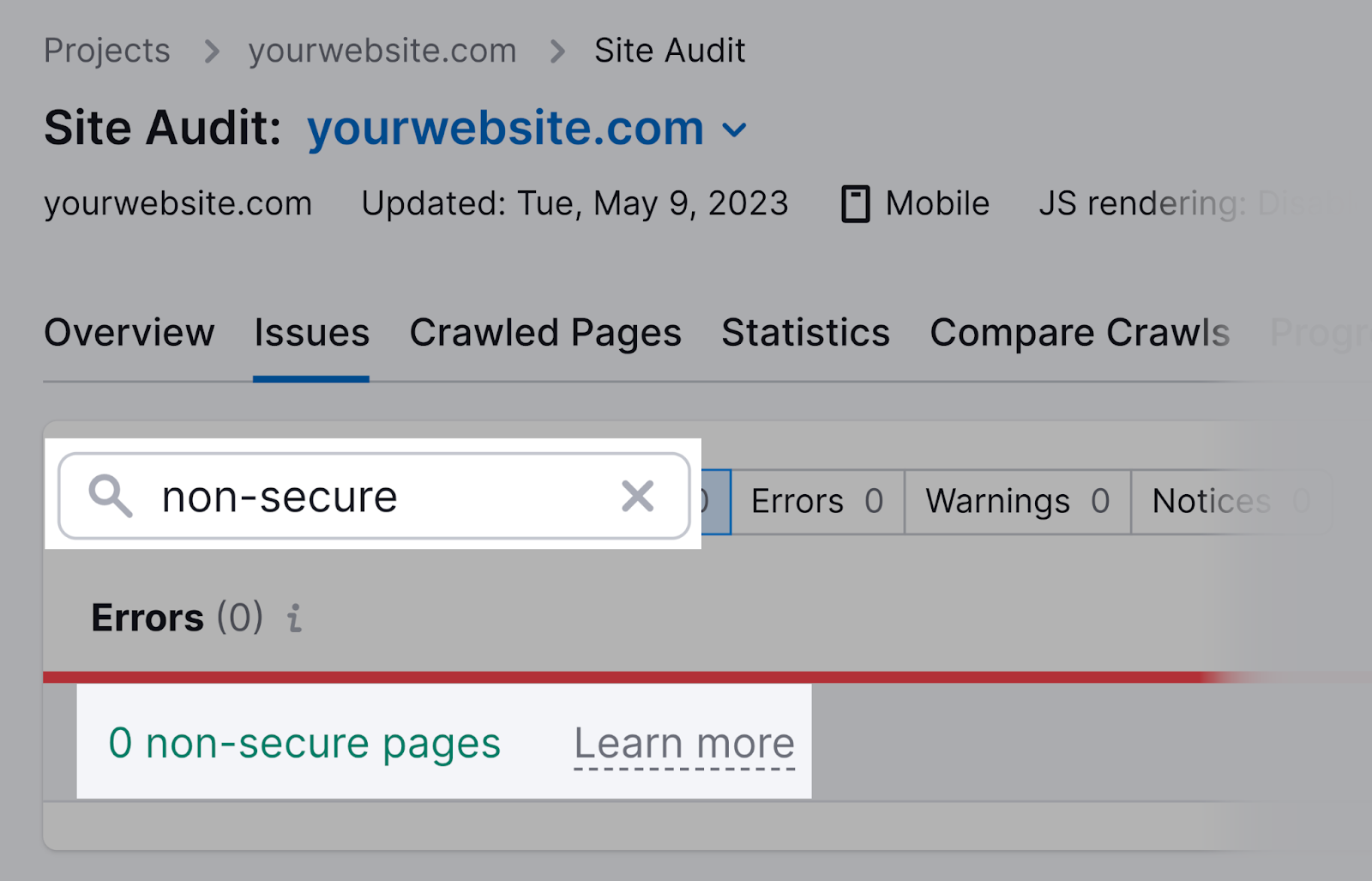

Alternatively, Semrush’s Site Audit tool can identify non-secure pages.

Set up a project in the tool and audit your website.

After the audit is complete, go to the “Issues” tab and search for “non-secure.” The tool will show if it detected any non-secure pages.

5. Find Resource Pages for Link Building

Resource pages are webpages that curate and link out to useful industry resources. Like articles or tools.

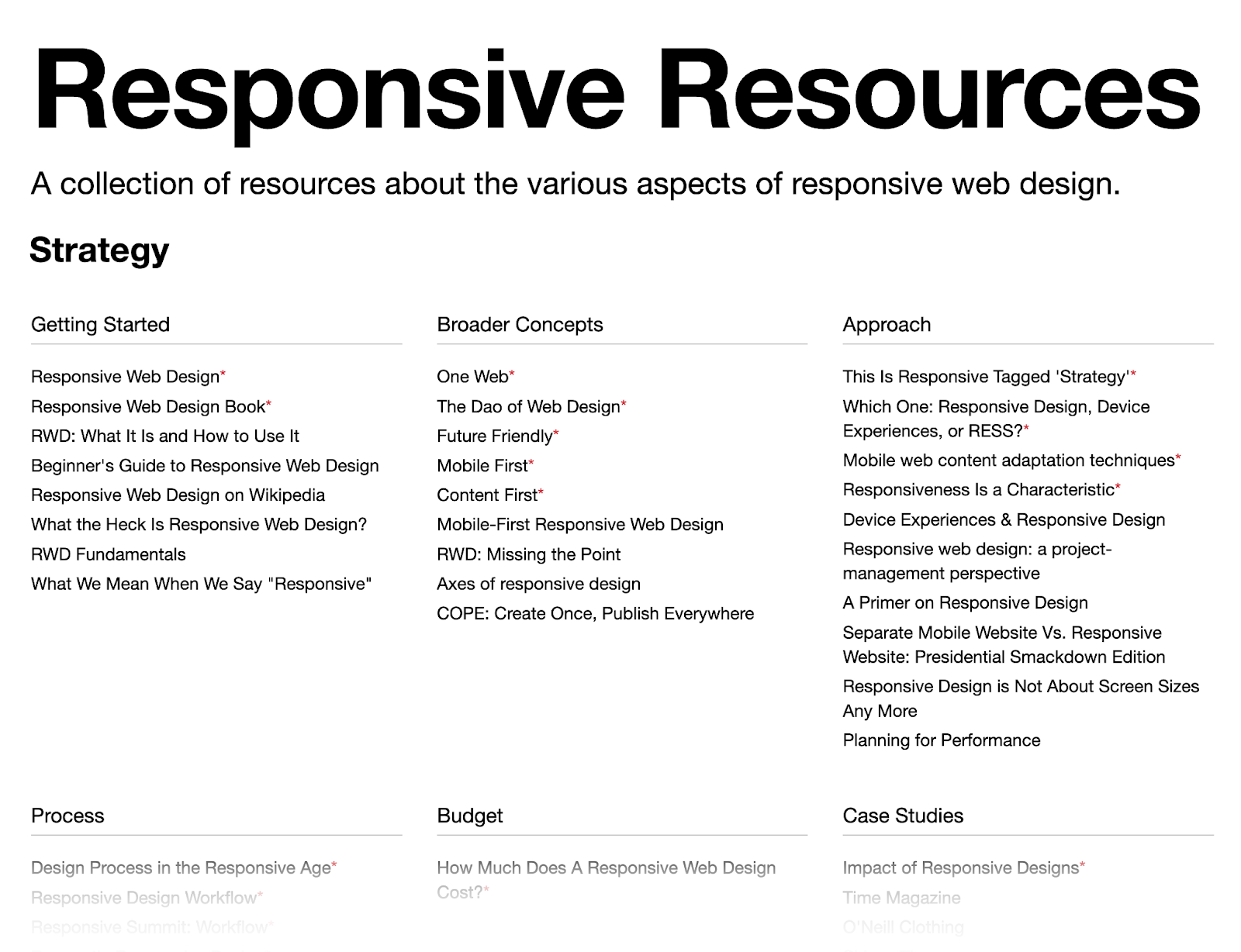

Here’s what a resource page looks like:

Reach out to the people who created the resource page and suggest your resource for inclusion.

If they decide to add it, you could potentially receive a backlink as well.

But how do you find websites that curate resource pages?

Google search operators can help.

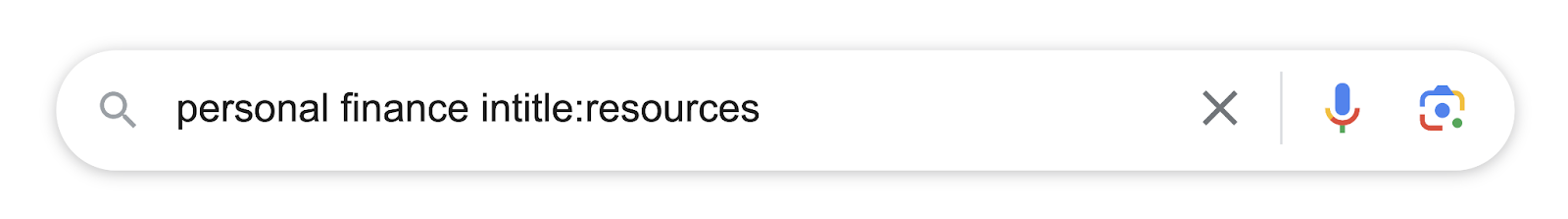

Search Google with one of these Google search strings:

- Your keyword intitle:resources

- Your keyword intitle:links

- Your keyword inurl:resources

- Your keyword inurl:links

All these operators will return sites that curate and link out to relevant resources in your niche.

For example, if we want to find resource page opportunities for personal finance websites, we’ll search Google like this:

And it will show websites curating resources.
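The four search strings above are every combination of two operators and two terms. As a small illustration, this Python sketch generates them for any keyword. The function name resource_page_queries is our own, and “personal finance” is the sample niche from this section.

```python
from itertools import product

OPERATORS = ("intitle", "inurl")
TERMS = ("resources", "links")


def resource_page_queries(keyword: str) -> list[str]:
    """Pair a keyword with each operator/term combination listed above."""
    return [f"{keyword} {op}:{term}" for op, term in product(OPERATORS, TERMS)]


for query in resource_page_queries("personal finance"):
    print(query)
```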

6. Track Down Duplicate Content Issues

Duplicate content is when the exact same content appears on the web in more than one place.

It could be on your website: Two or more pages on your website display the same content.

Or someone else’s website: Some other website copied and pasted your content onto their site.

That’s bad.

Luckily, search operators can help you find duplicate content issues.

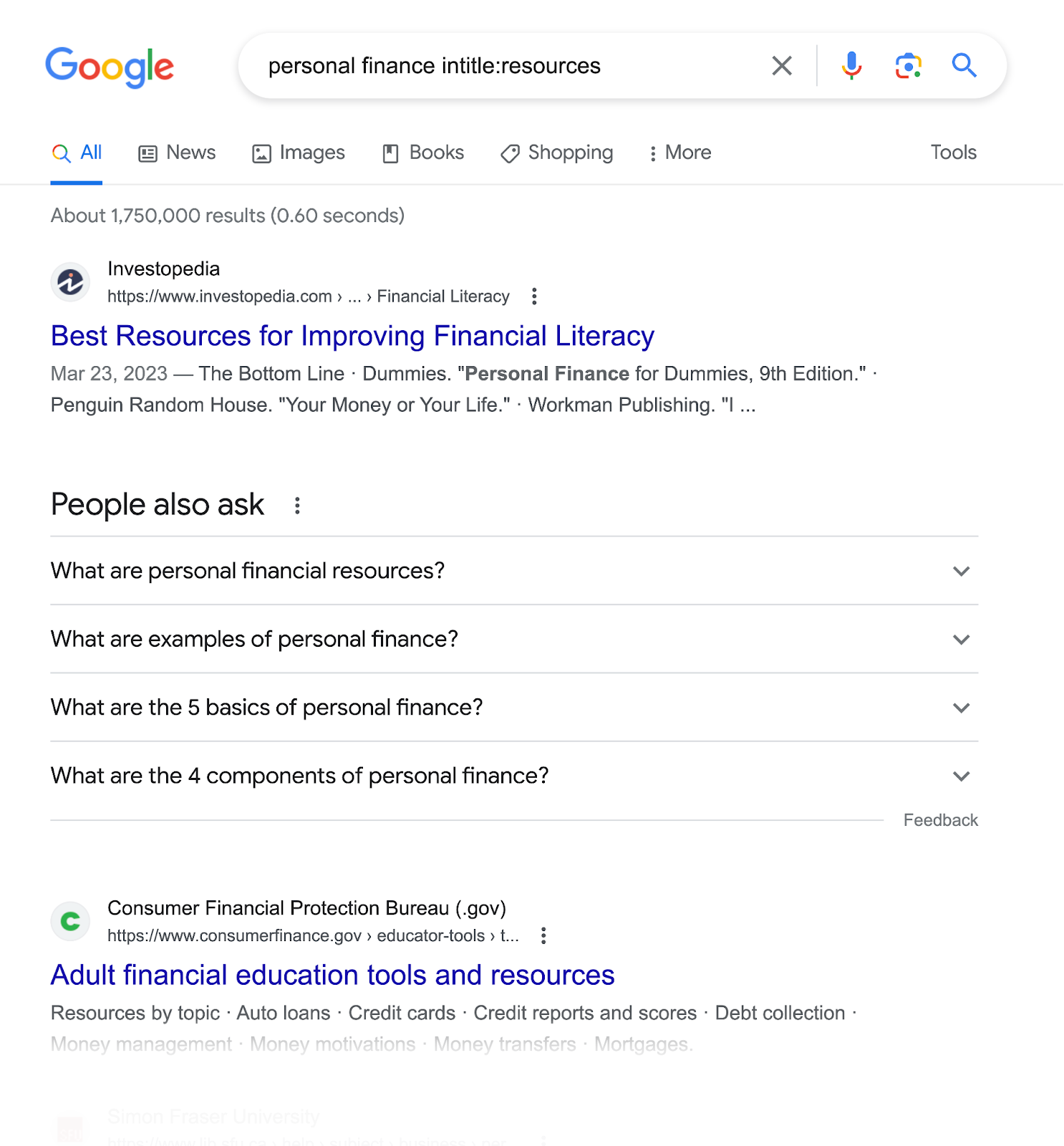

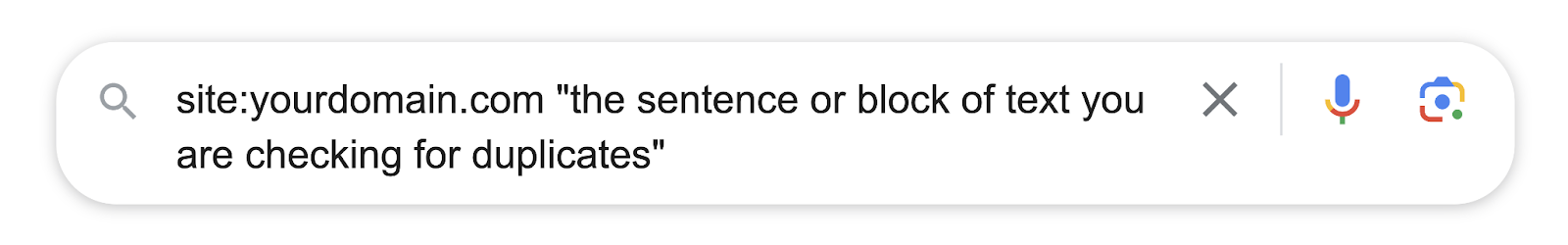

To see whether anyone has duplicated your content, use Google operators like this:

Here, we’re using the exclusionary “-site:” operator so results from your own site aren’t included.

Then the quotation marks (“”) to see if your exact sentence or block of text appears anywhere else on the web.

If you notice your content is duplicated elsewhere, reach out to those sites and make sure they link back to your site with proper attribution.

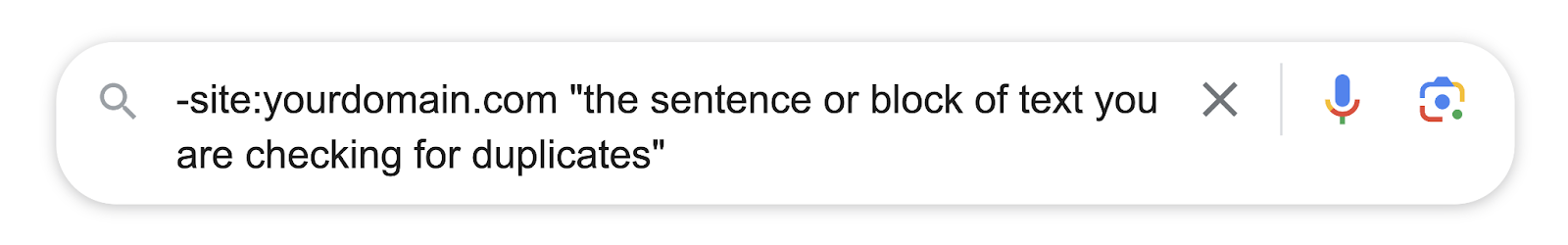

Now, to find duplicate content on your own website, use the following operators.

We’re using the “site:” operator to return results for your site only.

Then the quotation marks (“”) to see if there are multiple matching results present.
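The two checks differ only in whether “site:” is negated. The Python sketch below builds both queries from a sentence of your content. The function names and the domain example.com are hypothetical, and removing embedded double quotes is our own simplification so the exact-phrase match stays intact.

```python
def _exact_phrase(snippet: str) -> str:
    """Wrap a snippet in quotes, dropping any double quotes inside it."""
    return '"' + snippet.replace('"', "").strip() + '"'


def external_duplicates_query(domain: str, snippet: str) -> str:
    """Find the snippet anywhere except on your own domain."""
    return f"{_exact_phrase(snippet)} -site:{domain}"


def internal_duplicates_query(domain: str, snippet: str) -> str:
    """Find the snippet only on your own domain."""
    return f"site:{domain} {_exact_phrase(snippet)}"


sentence = "Google search operators are special commands"
print(external_duplicates_query("example.com", sentence))
print(internal_duplicates_query("example.com", sentence))
```

More than one result for the internal query suggests the same text lives on multiple pages of your site.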

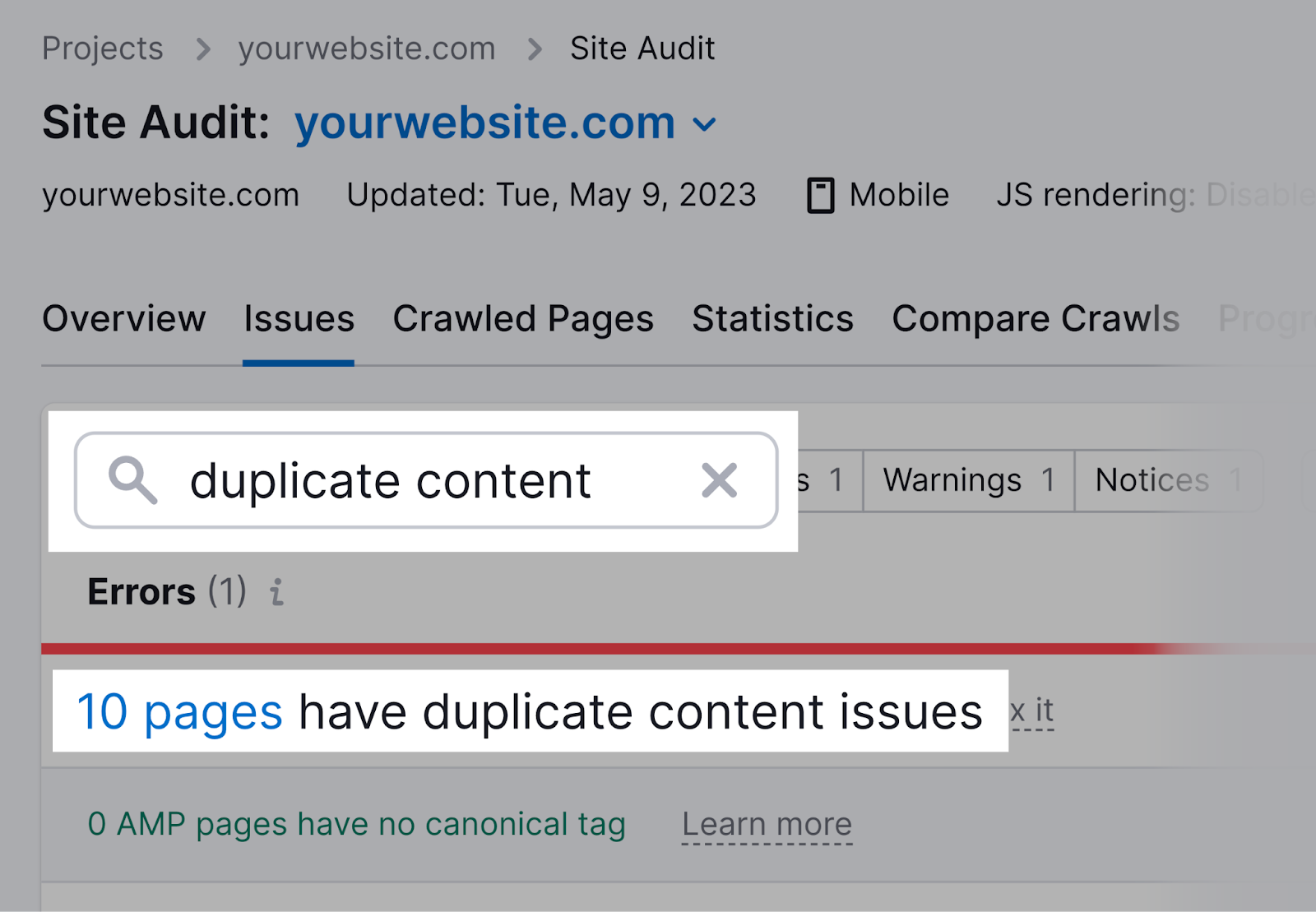

Also, Semrush’s Site Audit tool can find duplicate content issues and save you from manual search work.

Set up a project in the tool and run a full crawl of your site.

Once the crawl is complete, navigate to the “Issues” tab. Then, search for “duplicate content.”

The tool will show if you have duplicate content on your own site.

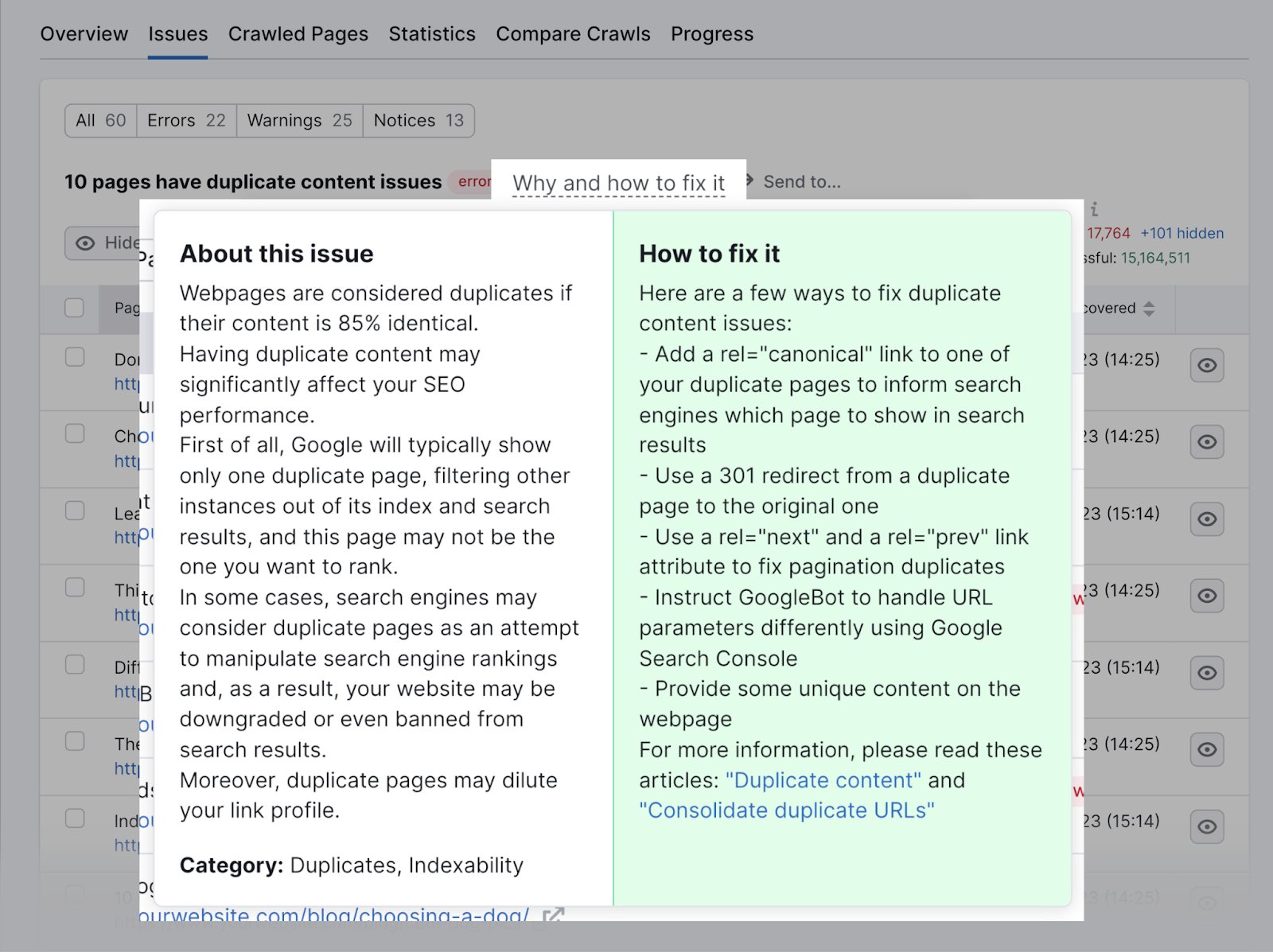

Click on the “# pages” link to see a full list of pages with duplicate content. Then click on the “Why and how to fix it” button at the top of the page to get advice on how to fix it.

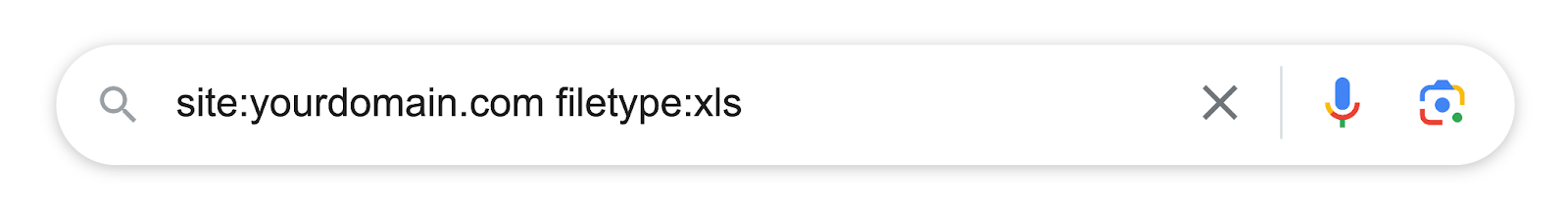

7. Find Files You Don’t Want to Keep in Google’s Index

If you use special content assets (PDF files, Excel spreadsheet templates, etc.) as your lead magnets, you probably don’t want them appearing in Google search results.

That’s because users can freely access them without exchanging their contact information with your business.

This could lead to a decrease in lead generation and sales.

To check whether Google has indexed your lead magnet files, use the “site:” and “filetype:” operators.

Like this:

If you see that your lead magnet files are in Google’s index, remove them by applying a “noindex” rule. For non-HTML files such as PDFs, set it through the X-Robots-Tag HTTP header.

New to the “noindex” attribute? Read our full guide to meta robots and x-robots-tag to get started.
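The lead-magnet check described above can be sketched in Python as one query per file type. The helper name indexed_files_queries, the domain example.com, and the sample extensions are all assumptions for illustration.

```python
def indexed_files_queries(domain: str, filetypes: list[str]) -> list[str]:
    """Build one site: + filetype: query per file extension."""
    # Normalize extensions such as "PDF" or ".xlsx" to "pdf" and "xlsx".
    return [
        f"site:{domain} filetype:{ft.lower().lstrip('.')}"
        for ft in filetypes
    ]


for query in indexed_files_queries("example.com", ["PDF", ".xlsx"]):
    print(query)
```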

8. Search Outreach Prospects’ Social Media Profiles

Many of the most useful link building strategies revolve around outreach.

It’s where you contact website owners—via social media, email, etc.—and give them a compelling reason to link to your content.

Use Google search operators to find your prospects’ social media profiles.

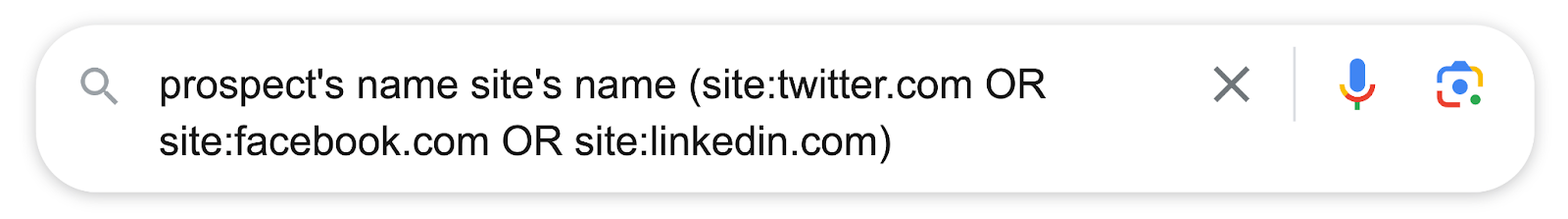

Here are the search operators you can use:

In this example, we’re using the parentheses () to group multiple operators together.

Then the “OR” operator to surface your prospect’s profiles on any of the specified social media platforms.

So you can contact them there.
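The query is not reproduced in the text above. A likely shape is the prospect’s name followed by a parenthesized group of “site:” operators joined with “OR”. The sketch below assumes that shape. The function name social_profile_query, the name “Jane Doe,” and the platform list are hypothetical.

```python
def social_profile_query(name: str, platforms: list[str]) -> str:
    """Group site: operators with OR inside parentheses after a name."""
    sites = " OR ".join(f"site:{platform}" for platform in platforms)
    return f"{name} ({sites})"


print(social_profile_query("Jane Doe", ["twitter.com", "facebook.com", "linkedin.com"]))
```

Add or remove platforms depending on where your prospects are most active.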

Master the Google Search Operators List

Learning how to use Google search commands is a skill. It takes time and practice to master.

But the benefits are well worth the effort.

With Google search modifiers, you can narrow your search and filter out unwanted or irrelevant results.

These operators also help you carry out regular SEO activities.

To help you master Google search operators, we’ve put together a downloadable cheat sheet.

It’s a handy reference guide to the most useful search operators.

Download our cheat sheet and start mastering Google search operators today.